The smartest member of your developer ecosystem: Introducing the Mezmo MCP Server

Building a great developer experience is about more than just the code. It’s about creating a unified ecosystem where your tools work together seamlessly. That’s been the vision behind our work on the Mezmo MCP Server, and I’m excited to share it with you.

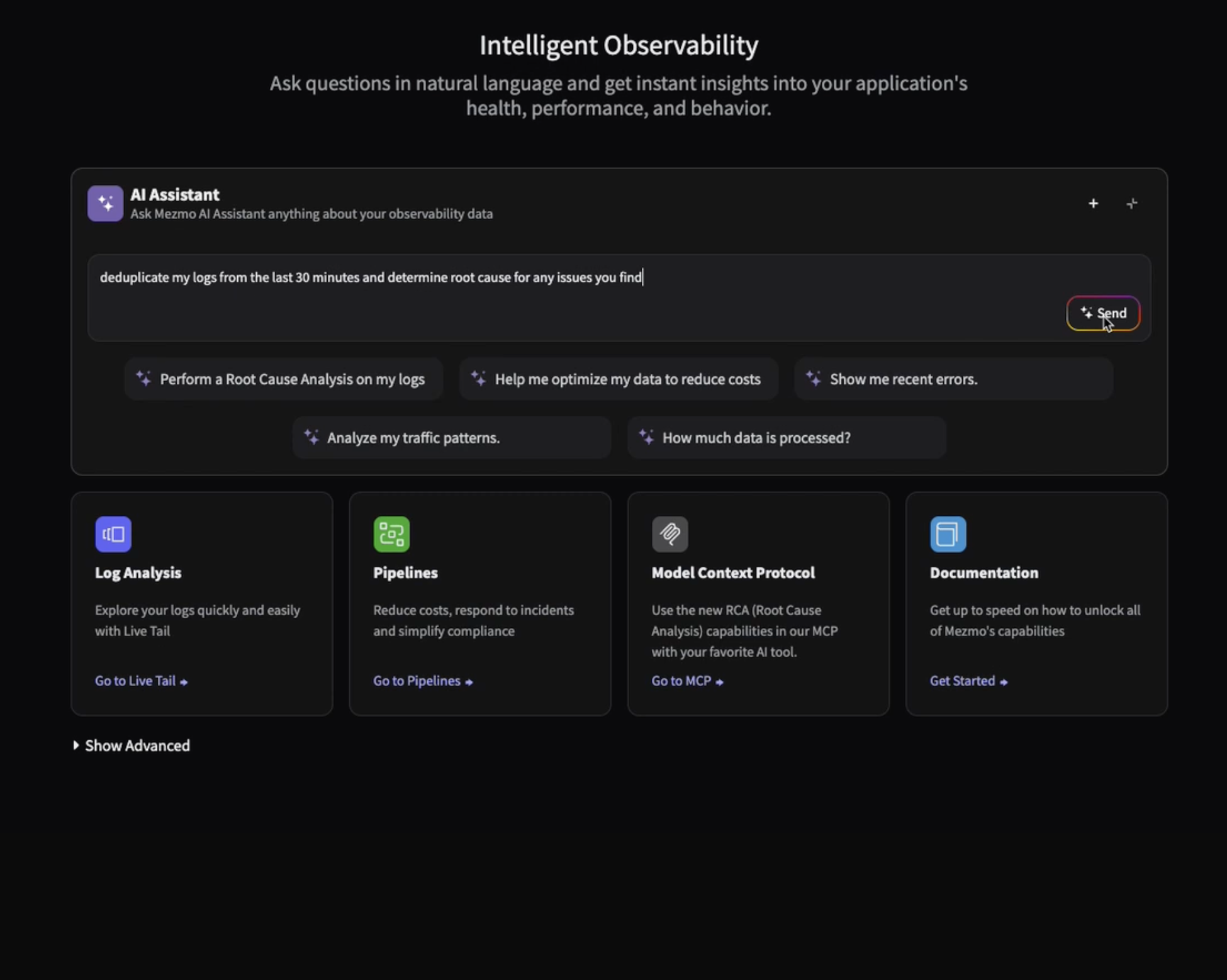

At its core, the MCP Server is a universal remote for your data pipeline. It provides a standardized, structured way for your entire developer ecosystem (your IDE, your AI assistants, your custom scripts) to access rich, contextual observability data from Mezmo. Instead of building one-off, brittle integrations, every tool just needs to know how to talk to the MCP Server. It's a common language that instantly makes your existing tools smarter.

A Zero-Friction Setup

We know that a great tool is useless if no one uses it. That's why we designed the MCP Server to be as frictionless as possible. There are no extra packages to install and no complex configurations to manage. The setup is simple: you just need a Mezmo account and an API key. Once you have that, you can connect the MCP Server to a growing list of tools.

For example, if you're using an IDE like Cursor, you can instantly empower it with Mezmo data just by providing the API key. We’re working hard to make this a reality for other tools in your ecosystem as well. This low-friction approach means you can go from "what is this?" to "this is incredible" in a matter of minutes.
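As a rough illustration of what "just providing the API key" can look like, the sketch below builds a configuration in the shape Cursor uses for remote MCP servers in its `mcp.json` file. The server URL is a placeholder, and both the `mezmo` label and the bearer-token header are assumptions made for the example; take the real endpoint and authentication details from Mezmo's documentation.

```python
import json

# Placeholder only: this post does not state the server URL.
# Replace it with the endpoint given in Mezmo's documentation.
PLACEHOLDER_URL = "https://mcp.example.invalid/mcp"


def build_cursor_mcp_config(api_key: str, url: str = PLACEHOLDER_URL) -> dict:
    """Return a dict shaped like Cursor's mcp.json for one remote MCP server."""
    return {
        "mcpServers": {
            # "mezmo" is an arbitrary label chosen for this example.
            "mezmo": {
                "url": url,
                # Assumption: the API key is sent as a bearer token.
                "headers": {"Authorization": f"Bearer {api_key}"},
            }
        }
    }


if __name__ == "__main__":
    # Print JSON that could be pasted into a project's .cursor/mcp.json.
    print(json.dumps(build_cursor_mcp_config("YOUR_MEZMO_API_KEY"), indent=2))
```

Keeping the key out of version control (for example, by reading it from an environment variable before generating the file) is usually a sensible default.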

Bringing Data to Your Workflow, Not Forcing You to Hunt for It

The real magic of the MCP Server is how it changes your workflow. You're no longer forced to leave your primary environment to perform a log search or check the health of a pipeline. The MCP Server brings that functionality directly to you.

Imagine this: you're working on a new feature in your IDE and you encounter an error. Instead of context-switching to a web-based dashboard, you can simply prompt the AI assistant in your IDE. Thanks to the MCP Server, that assistant can run a contextual log search across your Mezmo data, help identify the root cause, and even suggest a fix, all without you ever leaving your code.

This isn't just a nice-to-have; it's a fundamental shift in how we debug and manage our systems. It reduces mental friction, accelerates root cause analysis, and keeps you focused on the task at hand.

Under the Hood (High-Level)

The Mezmo MCP Server is a remote, HTTP-streamable server designed for speed and reliability. It's built to handle the high volume of telemetry data Mezmo is known for and to serve it up in a highly consumable format. This "remote-first" deployment model means the server can be deployed anywhere and accessed by any tool with an internet connection, providing maximum flexibility and security.
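For readers curious what talking to a remote MCP server over HTTP involves, here is a minimal sketch of the first message a client sends. It assumes the server follows the Model Context Protocol's Streamable HTTP transport, where a client POSTs JSON-RPC 2.0 messages and accepts either a JSON or an event-stream response. The URL passed in, the bearer-token header, and the client name are assumptions for illustration, not details taken from Mezmo's implementation. The function only builds the request; it does not send it.

```python
import json
import urllib.request


def build_initialize_request(url: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) an MCP `initialize` request over HTTP."""
    body = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "initialize",
        "params": {
            # MCP spec revision that introduced Streamable HTTP.
            "protocolVersion": "2025-03-26",
            "capabilities": {},
            # Hypothetical client identity for this example.
            "clientInfo": {"name": "example-client", "version": "0.1.0"},
        },
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",
            # The client must be willing to receive plain JSON or an SSE stream.
            "Accept": "application/json, text/event-stream",
            # Assumption: the API key is sent as a bearer token.
            "Authorization": f"Bearer {api_key}",
        },
    )
```

In practice an MCP-aware tool such as an IDE or assistant handles this handshake for you; the sketch is only meant to show that the exchange is ordinary HTTP plus JSON.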

This is just the beginning. The Mezmo MCP Server is a foundation we’re building on, and we’re excited to see how developers will use it to create smarter, more integrated workflows. We believe that by making observability data more accessible and contextual, we can help teams build, debug, and manage their systems with unprecedented efficiency.

We’re eager to hear your thoughts and see how you put the Mezmo MCP Server to work. What other tools would you like to see integrated with it?

Get started with a free trial and try the Mezmo MCP Server today.
