Deliver AI-ready context with confidence: From systems to root causes

Are you challenged by:

- Underperforming AI agents that consume hundreds of thousands of tokens without delivering accurate root cause analysis

- The "Haystack" problem: Overwhelming your LLMs with excessive, noisy data, which dilutes critical signals and drives up token cost without providing accurate results

- Analysis paralysis that leads to slow, multi-prompt debugging when you need instant insight

- Fragmented pipelines that starve AI and engineering teams of a clean, contextual signal

Mezmo applies context engineering to shape telemetry in motion so teams and AI agents get the right data, with the right structure, at the right time, while cutting observability costs by 70-90%.

Traditional observability hoards data and makes you parse and correlate after an incident. Context engineering answers questions now by engineering the inputs that systems and agents need to be reliable, safe, and efficient.

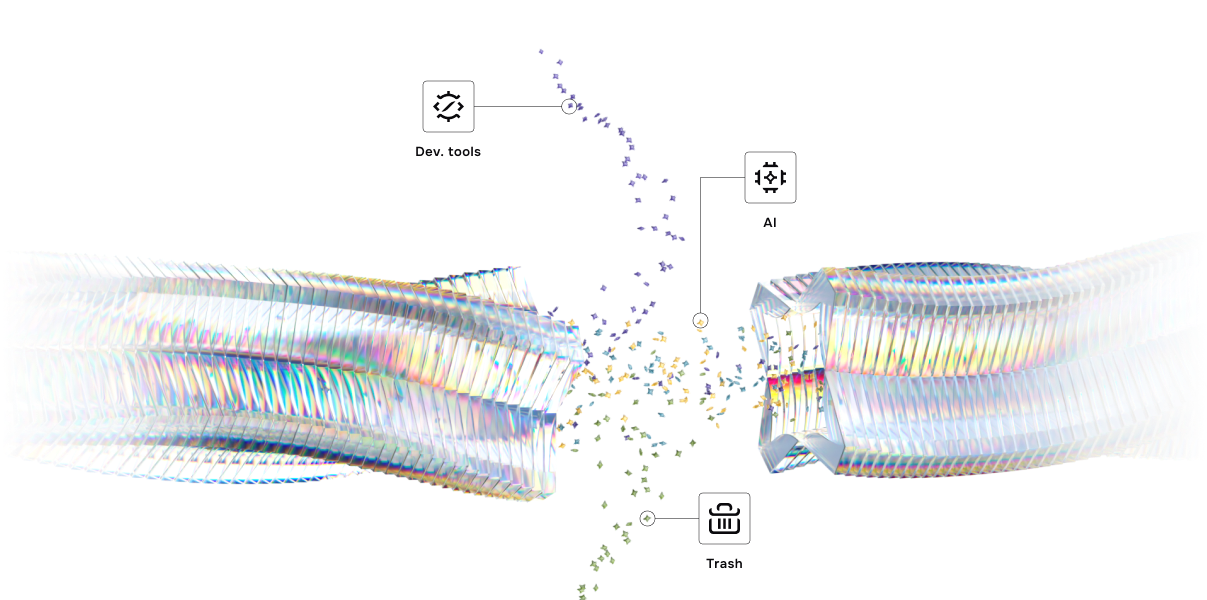

Key capabilities for context engineering

Feed agents high-fidelity context through Mezmo's MCP Server, context engine, and native support for providers and frameworks like OpenAI, Bedrock, and LangChain.

Automatically analyze log patterns and system behavior to identify incident causes: definitive answers, not guesses.

Data is processed and enriched at ingestion time, enabling AI agents and teams to immediately act on clean signals.

Deliver curated, scoped context to AI agents and destinations, replacing raw, noisy data dumps.

Automatically detect and isolate critical signals from high-volume, low-value noise, allowing AI to focus solely on interpretation and recommendations.
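To make the idea of isolating signal from noise concrete, here is a minimal sketch of one common approach: collapsing similar log lines into templates and keeping only the patterns at severity levels of interest. This is an illustration only, not Mezmo's implementation; the masking rules, the `to_template` and `summarize` names, and the sample log lines are all assumptions.

```python
import re
from collections import Counter

# Hypothetical masking rules: replace variable parts of a log line so that
# similar lines collapse into a single template.
_MASKS = [
    (re.compile(r"\b[0-9a-f]{8,}\b"), "<hex>"),  # long hex-like IDs
    (re.compile(r"\d+"), "<num>"),               # any remaining digits
]


def to_template(line):
    """Mask variable fields so repeated events share one template."""
    for pattern, token in _MASKS:
        line = pattern.sub(token, line)
    return line


def summarize(lines, keep_levels=("ERROR", "WARN")):
    """Count templates and keep only those mentioning a level of interest."""
    counts = Counter(to_template(line) for line in lines)
    return [
        (template, n)
        for template, n in counts.most_common()
        if any(level in template for level in keep_levels)
    ]


# Illustrative sample data (made up for this sketch).
sample = [
    "INFO heartbeat ok seq=1",
    "INFO heartbeat ok seq=2",
    "ERROR timeout after 30s on host-12",
    "ERROR timeout after 45s on host-7",
    "INFO heartbeat ok seq=3",
]
summary = summarize(sample)
print(summary)
```

In this sketch, five raw lines shrink to one counted pattern, which is the kind of scoped context an agent can interpret without wading through repeated heartbeat messages.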

Real results from real teams

Cut analysis cost from $1-$6 per incident to $0.06 by prioritizing context over excessive prompting.

— Mezmo benchmarking data

Reduce token consumption from 500K to ~27K per incident for low-cost, high-fidelity analysis that scales.

— Mezmo benchmarking data
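For readers who want to see the benchmark figures as relative reductions, the arithmetic can be sketched as follows. The inputs are the numbers quoted above (with ~27K treated as exactly 27,000 for illustration); the `reduction` helper is a hypothetical name, not part of any Mezmo tooling.

```python
def reduction(before, after):
    """Return (percent reduction, fold reduction) between two values."""
    return (1 - after / before) * 100, before / after


# Tokens per incident: 500K down to roughly 27K.
token_pct, token_fold = reduction(500_000, 27_000)
print(f"{token_pct:.1f}% fewer tokens, about {token_fold:.1f}x smaller")
# prints "94.6% fewer tokens, about 18.5x smaller"

# Cost per incident: $1-$6 down to $0.06, computed at both ends of the range.
low_pct, _ = reduction(1.00, 0.06)
high_pct, _ = reduction(6.00, 0.06)
print(f"{low_pct:.0f}% to {high_pct:.0f}% lower cost per incident")
# prints "94% to 99% lower cost per incident"
```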

Clean context beats clever prompting. Mezmo's context-first pipeline reduced prompt bloat and stabilized outputs, improving quality while cutting per-incident costs.

— AI Engineer

Explore more

Stop searching and start solving.

- ✔ Schedule a 30-minute session

- ✔ No commitment required

- ✔ Free trial available