How Telemetry Pipelines Save Your Budget

This is an updated version of an earlier blog post, revised to reflect current definitions of a telemetry pipeline and additional capabilities available in Mezmo.

Our recent blog post about observability pipelines highlighted how they centralize telemetry data and make it actionable. A key benefit of telemetry pipelines is that users don't have to compare data sets manually or rely on batch processing to derive insights. Instead, they can derive insights directly while the data is in motion. As a result, teams get access to the data they need to make decisions faster.

Tip: For more information about the business value of observability pipelines, read our Observability Pipeline Primer.

Another key benefit of observability pipelines is that they help save on data storage costs. Organizations are generating more data, but neither budgets nor the value derived from that data are keeping pace with its growth. Telemetry pipelines can play a central role in helping businesses reduce spending on data without losing needed insights. Organizations that use telemetry pipelines store only what they need and pay only for what they use, rather than trying to store everything.

Let's jump into some ways telemetry pipelines can save your budget by reducing spending and increasing efficiency.

Step 1: Reduce Volume to Downstream Tools

One of the most significant challenges when using traditional observability data tools is the cost of ingesting and storing large amounts of data. Historically, teams directed all of their data to a SIEM or observability tool because it was the easiest way to ensure they could quickly access it, run queries, and get insights. But with modern environments leading to an explosion of data, these old paradigms are at odds with budgetary pressures. Legacy tools often lack intuitive ways to filter data or route it elsewhere, and they often don't inform you of an overage until it has already occurred. On top of that, you could spend a great deal of money and use only about 50-60% of the data you store, simply because storing everything is the only way to keep it easily accessible. Ultimately, this leaves teams with an impossible choice between staying within budget and ensuring they have enough observable surface area.

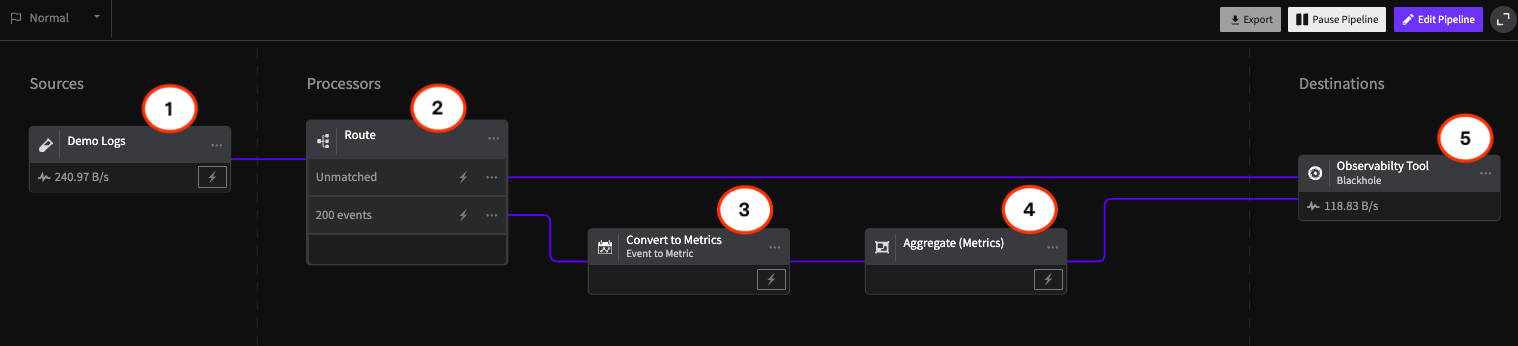

With a telemetry pipeline, you can evaluate each piece of data before it goes to a downstream destination, for example by deduplicating, filtering, or enhancing it, so that useless data is removed from your stream. You can also get in-stream alerts to stop or slow down the data flow before you incur an overage at your destination. This ensures that you're using and deriving value from the data you need rather than paying to store unnecessary data.
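To make the idea concrete, here is a minimal sketch in Python of filtering, deduplicating, and budget-checking events while they are in motion. It is an illustration only and not Mezmo's API: the function `reduce_stream`, the event fields (`app`, `level`, `message`), and the parameters `daily_budget_bytes` and `alert_ratio` are all hypothetical names chosen for this example.

```python
import json
from typing import Callable, Dict, Iterable, Iterator

Event = Dict[str, str]


def reduce_stream(
    events: Iterable[Event],
    drop_levels: frozenset = frozenset({"debug"}),  # hypothetical "noise" levels
    daily_budget_bytes: int = 1_000_000,            # hypothetical destination quota
    alert_ratio: float = 0.8,                       # alert at 80% of the quota
    on_alert: Callable[[str], None] = print,
) -> Iterator[Event]:
    """Yield only the events worth sending downstream."""
    seen = set()
    sent_bytes = 0
    alerted = False
    for event in events:
        # Filter: drop levels that nobody queries downstream.
        if event.get("level") in drop_levels:
            continue
        # Deduplicate: skip events identical to one already forwarded.
        key = (event.get("app"), event.get("level"), event.get("message"))
        if key in seen:
            continue
        size = len(json.dumps(event).encode("utf-8"))
        # Stop the flow before the destination quota would be exceeded.
        if sent_bytes + size > daily_budget_bytes:
            break
        seen.add(key)
        sent_bytes += size
        # In-stream alert: warn once when usage nears the quota.
        if not alerted and sent_bytes >= alert_ratio * daily_budget_bytes:
            alerted = True
            on_alert(f"{sent_bytes} of {daily_budget_bytes} bytes used")
        yield event


if __name__ == "__main__":
    sample = [
        {"app": "api", "level": "debug", "message": "cache hit"},
        {"app": "api", "level": "error", "message": "timeout"},
        {"app": "api", "level": "error", "message": "timeout"},
        {"app": "web", "level": "info", "message": "started"},
    ]
    for kept in reduce_stream(sample):
        print(kept)
```

In this sketch the debug event and the repeated error never reach the destination, so only two of the four sample events would be billed. A real pipeline would typically deduplicate within a time window and throttle rather than stop outright; those details are left out to keep the example short.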

Step 2: Optimize Data Before Sending to Downstream Tools

Reducing the volume of data sent to downstream tools is an easy first step toward saving.

It gets even better, though.

Telemetry pipelines allow you to pick and choose exactly where your data flows, meaning you don't have to pay for the same data in multiple destinations.

While you can drop some data from your stream to avoid unnecessary costs, you still need to retain some types of data for compliance purposes. For example, some companies require storing multiple months of customer log data in case they need to troubleshoot issues. In the past, all of this data was routed to expensive legacy systems to ensure its availability.

With a telemetry pipeline, teams can easily segment compliance data and route it directly into cheap object storage, such as Amazon S3, instead of passing it through a more expensive platform. This allows your team to stay compliant by having historical data, and it saves your budget by bypassing more expensive data destinations.
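The routing idea can be sketched as an ordered list of rules in which the first match wins, so each event is delivered to exactly one destination. This is a simplified illustration under stated assumptions, not Mezmo's configuration format: the destination names (`object_storage`, `analysis_tool`), the event fields (`type`, `level`), and the functions `route` and `partition` are hypothetical.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Optional, Tuple

Event = Dict[str, str]
Rule = Tuple[str, Callable[[Event], bool]]

# Ordered rules: the first matching rule decides the destination, so an
# event is never paid for twice. All names here are illustrative.
ROUTES: List[Rule] = [
    # Compliance data goes straight to cheap object storage (for example S3).
    ("object_storage", lambda e: e.get("type") == "customer_log"),
    # Only actionable events reach the more expensive analysis tool.
    ("analysis_tool", lambda e: e.get("level") in {"warn", "error"}),
]


def route(event: Event, routes: List[Rule] = ROUTES) -> Optional[str]:
    """Return the destination for an event, or None to drop it."""
    for destination, matches in routes:
        if matches(event):
            return destination
    return None


def partition(events: List[Event]) -> Dict[str, List[Event]]:
    """Group events into one batch per destination."""
    batches: Dict[str, List[Event]] = defaultdict(list)
    for event in events:
        destination = route(event)
        if destination is not None:
            batches[destination].append(event)
    return dict(batches)


if __name__ == "__main__":
    sample = [
        {"type": "customer_log", "level": "error"},
        {"type": "app", "level": "error"},
        {"type": "app", "level": "debug"},
    ]
    for destination, batch in partition(sample).items():
        print(destination, len(batch))
```

Putting the compliance rule first is a deliberate choice in this sketch: a customer log that also happens to be an error is archived in object storage and bypasses the more expensive destination entirely. Teams that need such events in both places would add an explicit rule for that case and accept the extra cost.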

Step 3: Optimize Engineering Time

It's important to remember that cost savings can be measured not only in data volume but also in the time your teams save when only relevant data flows to them. Because you are sending only the data you need downstream, and you're confident that all of it is valuable and actionable, you will see significant increases in the productivity of your teams. The metrics that most commonly decrease are Mean Time to Detection (MTTD) and Mean Time to Resolution (MTTR).

Using telemetry pipelines to optimize for engineer productivity also has impactful knock-on effects. By reducing the time your teams spend detecting and resolving issues, you give them more time to focus on critical tasks, such as creating your next big product or designing innovative new features. Furthermore, this increased ability to detect and resolve issues quickly can save you millions as your IT and Ops teams guard against cyberattacks and system failures that can lead to data breaches and leaks.

Step 4: Enjoy the Benefits of a Pipeline!

Beyond cost savings, organizations that leverage telemetry pipelines gain a competitive advantage in that they can accelerate their decision-making and innovation. Resources that would normally be spent on tools and storage for finding and fixing issues can instead be invested in the products themselves. We believe this is why all organizations should take a hard look at how they’re advancing their observability plans and evaluate the benefits they could achieve by implementing telemetry pipelines.

Tip: To learn more about the factors you should consider when looking for an observability pipeline, check out our white paper, The Decision Maker's Guide to Choosing an Observability Pipeline.

We built our telemetry pipeline on the foundation of first-generation pipeline solutions that emphasized data aggregation and routing. Our open platform supports various data sources and destinations, and comes with a number of processors (such as sampling, deduping, and filtering) that help you shape data and separate signal from noise. The result is more actionable data to improve efficiency. With easy-to-configure routing rules, you can optimize the flow of your data to help cut costs.

Try Mezmo telemetry pipeline today.
