LogDNA and IBM Find Synergy in Cloud

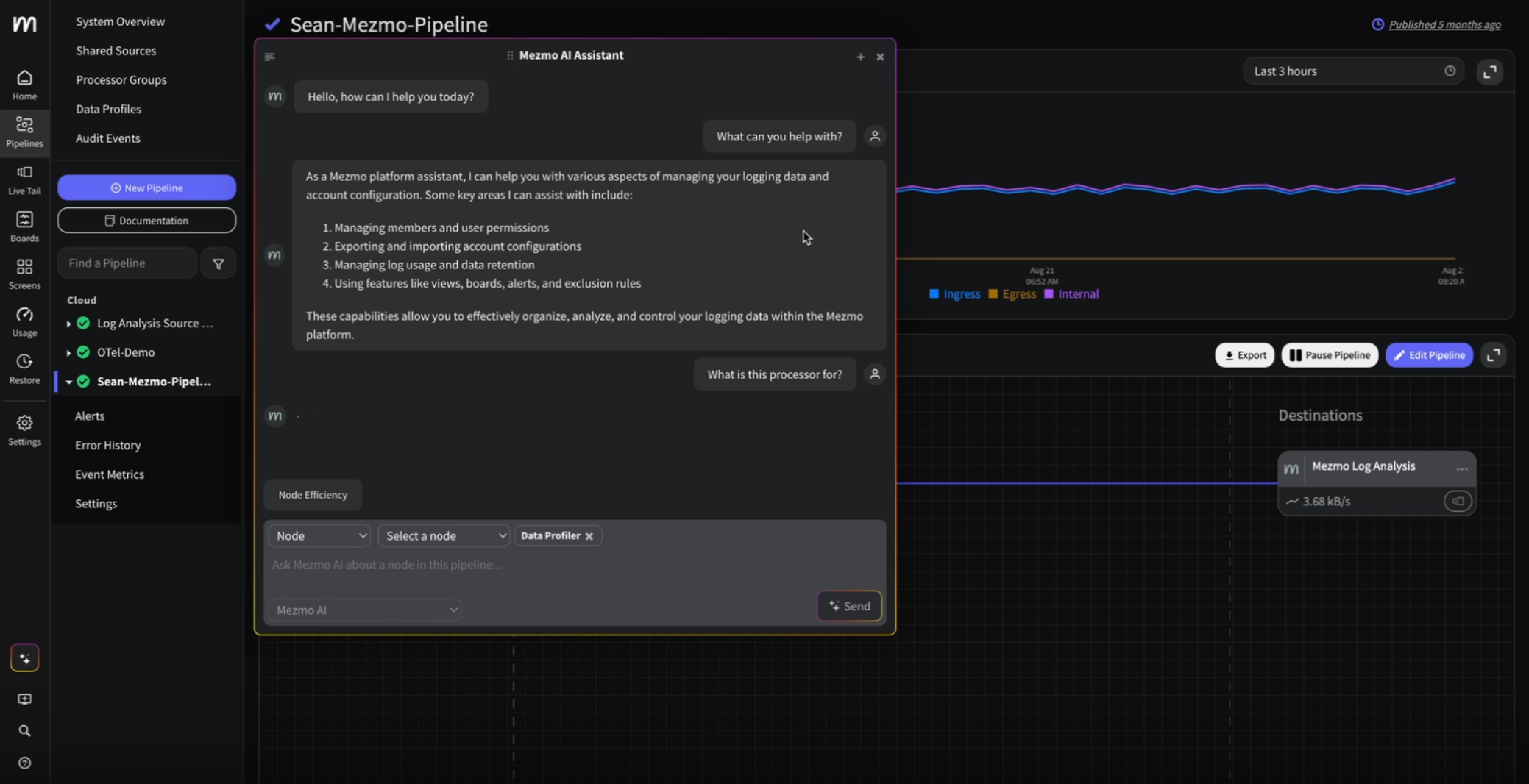

LogDNA is now Mezmo, but the product you know and love is here to stay.

First published on www.ibm.com on October 7, 2019.

Written by: Norman Hsieh, VP of Business Development, LogDNA

You know what they say: you can’t fix what you can’t find. That’s what makes log management such a critical element in the DevOps process. Logging provides key information for software developers on the lookout for code errors.

While working on their third startup in 2013, Chris Nguyen and Lee Liu realized that traditional log management was wholly inadequate for addressing data sprawl in the modern, cloud-native development stack. That epiphany was the impetus for LogDNA, a twist on a logging platform that could respond and scale in dynamic cloud environments.

Pivoting to meet DevOps needs

What was straightforward when writing code for one server became unwieldy as virtualization, which runs multiple virtual servers on a single machine, moved into the data center and multiplied the number of log files. As applications grow, so do code issues. And as pressure mounts on IT for zero downtime, developers need real-time visibility and an easy way to chase data activity. Containers help isolate issues, but logging is still largely managed by the IT infrastructure team, who are unfamiliar with the applications, and that can tax limited resources.

Building on top of the popular Elasticsearch, LogDNA set out to create a solution modern enough to provide DevOps intelligence and automatically organize all that data. Fortunately, the LogDNA team saw the writing on the wall: Kubernetes. The timing was perfect. Developers were starting to adopt the lightweight, open source platform for managing containerized workloads. LogDNA seized on the advanced orchestration capabilities of Kubernetes in cloud environments and spun out an integrated, managed software-as-a-service (SaaS) solution that began to gain traction.

Looking for a strong partner

This work caught the attention of the IBM Cloud team, which itself was shifting focus to Kubernetes services and DevOps and working actively in the open source community. We quickly found synergy between our efforts. That synergy helped weave the LogDNA logging platform into the IBM global ecosystem, binding the two companies together as partners to deliver innovative, managed Kubernetes services.

While LogDNA is in the business of logging, it is also a storage and big data company. When the team initially looked at the IBM Cloud Kubernetes Service, they worried that it wasn’t going to meet their demands. Fortunately, several IBM distinguished engineers introduced the LogDNA team to the IBM Cloud Kubernetes Service bare metal offering.

The flexibility of a bare metal option allowed LogDNA to get the IOPS (input/output operations per second) we need to read and write quickly out of storage, and at a less-expensive price than network-based storage.

The IBM-LogDNA relationship is an example of true collaboration. IBM has quickly responded to product developments recommended by the LogDNA team. However, it’s not only IBM delivering enhancements; LogDNA has adjusted some process and infrastructure planning based on suggestions from the IBM team. The refinement of the logging-related offerings is a chance for the LogDNA and IBM teams to work hand-in-hand to build better services.

Getting to the heart of DevOps

LogDNA’s product is heavily focused on the DevOps space. It provides better insights, better observability into development stacks and better building tools that help developers. It offers the convenience of a very robust log management tool without the inconvenience of having to manage or configure anything.

In a world that churns out disruptive direct-to-consumer applications (think Uber), there is a growing market for a level of scalability not being addressed by others in the market today.

With the combination of IBM and LogDNA, we can help clients no matter where they are on their journey: public clouds, on-premises or hybrid. The goal is to provide a log management tool that optimizes a developer’s data. The LogDNA tool focuses heavily on things like automatic parsing. Any data that comes into LogDNA is parsed automatically, since the tool can recognize the type of incoming logs. Our tool bundles its services for simplicity and ease of use, so developers don’t need to worry about logs.
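To give a feel for what automatic parsing means in general, here is a minimal Python sketch. It is an illustration only and not LogDNA's implementation: the `parse_line` function, the `_KV_PATTERN` regular expression and the three format names are all assumptions made for this example. It guesses whether a line is JSON, key=value pairs or plain text, and returns structured fields either way.

```python
import json
import re

# Hypothetical pattern for key=value pairs such as: level=info msg="started"
_KV_PATTERN = re.compile(r'(\w+)=("[^"]*"|\S+)')


def parse_line(line: str) -> dict:
    """Guess the format of one log line and return structured fields.

    Illustrative only: real log platforms recognize many more formats.
    """
    text = line.strip()

    # 1. Try JSON first: a line that starts with "{" may be a JSON object.
    if text.startswith("{"):
        try:
            parsed = json.loads(text)
            if isinstance(parsed, dict):
                return {"format": "json", "fields": parsed}
        except json.JSONDecodeError:
            pass  # Not valid JSON; fall through to the next guess.

    # 2. Try key=value pairs, stripping quotes around quoted values.
    pairs = _KV_PATTERN.findall(text)
    if pairs:
        fields = {key: value.strip('"') for key, value in pairs}
        return {"format": "keyvalue", "fields": fields}

    # 3. Fall back to treating the whole line as an unstructured message.
    return {"format": "plain", "fields": {"message": text}}


if __name__ == "__main__":
    for sample in (
        '{"level": "error", "msg": "disk full"}',
        'level=info msg="service started"',
        "Connection reset by peer",
    ):
        print(parse_line(sample))
```

The point of the sketch is that the developer sends raw lines and gets searchable fields back without writing any parsing rules, which is the convenience described above.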

Partnering around the world

The IBM global footprint is enabling LogDNA to deliver this service consistently around the world. As the preferred logging service, LogDNA is available in the IBM Cloud Service Catalog today and will be available in all IBM service regions. IBM customers can select and order LogDNA services by identifying that they want logging; this pulls LogDNA directly into their order.

In addition to customer-requested orders, IBM plans to use LogDNA for all of its internal systems, which further extends the relationship.

Ultimately, the joint effort of IBM and LogDNA is helping our customers stay focused on their priorities and leave the logging to us.

To learn the full story, read the case study.
