Top Five Reasons Telemetry Pipelines Should Be on Every Engineer’s Radar

You’ve probably felt the pain: data pouring in from every corner of your stack, tools choking on volume, dashboards lagging behind reality, alerts firing (or worse, not firing) without context. If that sounds familiar, it’s time to get serious about telemetry pipelines.

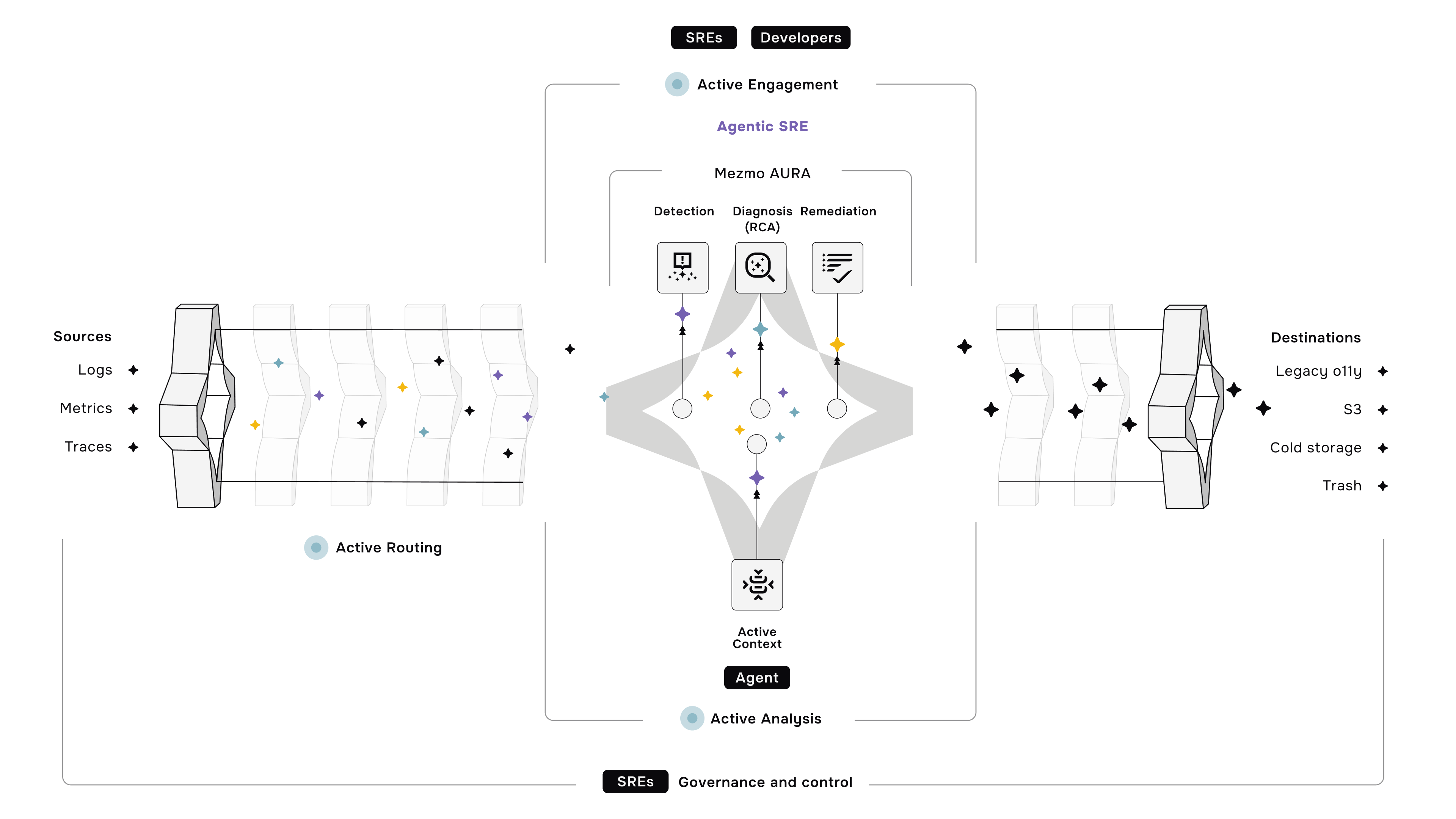

Whether you're an SRE trying to stabilize a flapping service or a developer navigating multi-cloud chaos, a telemetry pipeline (a processing layer that sits between the sources emitting logs, metrics, and traces and the tools that consume them) helps you take control of the data firehose. It's not just plumbing; it's a strategic foundation for observability, cost control, and operational agility.

Let’s break down five critical use cases where telemetry pipelines can make or break your engineering efficiency.

1. Data Volume Management: Send Less, Learn More

Ingesting everything is tempting. But when platforms like your APM or SIEM start throttling or your observability bill spirals out of control, reality hits.

A telemetry pipeline lets you filter, route, and shape data before it ever reaches your tools. Strip out debug logs in production. Drop metrics from noisy endpoints. Route only high-value telemetry to long-term storage. Suddenly, you're no longer fighting ingestion limits or paying to store garbage.

This is especially useful when:

- You're close to exceeding ingestion quotas on a SaaS monitoring platform.

- Your storage layer is buckling under logs you never read.

- You’re drowning in low-value events that add noise without insight.

A well-tuned pipeline ensures you capture what matters—and nothing else.
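To make the filter-and-route idea concrete, here is a minimal Python sketch of a single pipeline stage. It assumes events arrive as plain dicts with `env`, `level`, and `endpoint` keys. The noisy-endpoint list, the set of "high-value" levels, and the destination names `observability` and `archive` are hypothetical placeholders, not part of any particular product.

```python
from typing import Iterable

# Hypothetical event shape: plain dicts with "env", "level", and "endpoint" keys.
Event = dict

# Assumed examples of chatty endpoints and of what counts as "high value".
NOISY_ENDPOINTS = {"/healthz", "/metrics"}
HIGH_VALUE_LEVELS = {"warn", "error", "fatal"}


def keep(event: Event) -> bool:
    """Return False for events that should never leave the pipeline."""
    if event.get("env") == "production" and event.get("level") == "debug":
        return False  # strip debug logs in production
    if event.get("endpoint") in NOISY_ENDPOINTS:
        return False  # drop telemetry from noisy endpoints
    return True


def route(events: Iterable[Event]) -> dict[str, list[Event]]:
    """Filter events, then split survivors between a hot backend and an archive."""
    routes: dict[str, list[Event]] = {"observability": [], "archive": []}
    for event in events:
        if not keep(event):
            continue
        routes["observability"].append(event)
        if event.get("level") in HIGH_VALUE_LEVELS:
            routes["archive"].append(event)  # only high-value events are kept long term
    return routes


if __name__ == "__main__":
    sample = [
        {"env": "production", "level": "debug", "endpoint": "/checkout"},
        {"env": "production", "level": "info", "endpoint": "/healthz"},
        {"env": "production", "level": "error", "endpoint": "/checkout"},
        {"env": "staging", "level": "debug", "endpoint": "/checkout"},
    ]
    routes = route(sample)
    print(len(routes["observability"]), len(routes["archive"]))  # 2 1
```

Because the filter runs before any destination sees the data, a single rule change reduces volume for every downstream tool at once.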

2. Real-Time Insights: Stop Waiting for the Truth

The faster you can see what’s going wrong, the faster you can fix it.

Telemetry pipelines can transform raw data at ingestion, enabling real-time alerting, anomaly detection, and live dashboards. Whether you’re feeding Grafana, Kibana, or a custom dashboard, you get faster insights without waiting for batch jobs to crunch data downstream.

For production systems, this can be the difference between a quick fix and a customer-facing incident.

Use cases include:

- Powering real-time SLO dashboards with accurate metrics.

- Supporting instant alerts based on structured logs or trace events.

- Visualizing changes in application behavior the moment they happen.

Live data isn’t a luxury. It’s table stakes for modern reliability engineering.
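One way a pipeline can alert on data as it flows, rather than after a batch job, is to keep a small rolling window per signal. The Python sketch below assumes a stream of (timestamp, HTTP status code) pairs with non-decreasing timestamps and treats any status of 500 or above as an error. The class name `ErrorRateAlert`, the 60-second window, and the 5% threshold are illustrative assumptions, not recommended values or a vendor API.

```python
from collections import deque


class ErrorRateAlert:
    """Sliding-window error-rate check evaluated as each event arrives."""

    def __init__(self, window_seconds: float = 60.0, threshold: float = 0.05) -> None:
        self.window_seconds = window_seconds  # assumed to be positive
        self.threshold = threshold
        self._events: deque = deque()  # (timestamp, is_error) pairs, oldest first
        self._errors = 0  # running count of errors currently in the window

    def observe(self, timestamp: float, status_code: int) -> bool:
        """Record one request; return True if the windowed error rate exceeds the threshold."""
        is_error = status_code >= 500  # assumption: only 5xx responses count as errors
        self._events.append((timestamp, is_error))
        self._errors += is_error
        # Evict events that have aged out of the window.
        while self._events and self._events[0][0] <= timestamp - self.window_seconds:
            _, was_error = self._events.popleft()
            self._errors -= was_error
        return self._errors / len(self._events) > self.threshold


if __name__ == "__main__":
    alert = ErrorRateAlert(window_seconds=60, threshold=0.5)
    print(alert.observe(0, 200))   # False: 0 of 1 requests failed
    print(alert.observe(10, 503))  # False: 1 of 2 is not above 0.5
    print(alert.observe(20, 500))  # True: 2 of 3 failed
    print(alert.observe(90, 200))  # False: the three older events have aged out
```

Keeping a running error count means each update does only amortized constant work, which matters when a check like this sits on the ingestion hot path.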

3. Toolchain Optimization: Make Your Stack Work for You

Many engineering orgs run a mix of APMs, SIEMs, logging platforms, and tracing tools, and each of these tools has its own quirks, ingestion formats, and cost models.

A telemetry pipeline helps normalize data formats and preprocess telemetry before it hits expensive tools. Want to route the same data to both an open-source backend and a paid vendor? Done. Want to trim down payloads before sending them to your SIEM? Easy.

This isn’t just about saving money—though that’s a big plus. It’s about decoupling your telemetry architecture from any single vendor, so your data strategy stays flexible as your stack evolves.
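The normalize-then-fan-out pattern can be sketched in a few lines of Python. This version assumes two hypothetical source formats (one using `ts`/`level`/`message`, another using `timestamp`/`severity`/`msg`), maps both onto one common shape, and then delivers a full copy to an open-source backend and a trimmed copy to a SIEM. The destination names and the field list sent to the SIEM are placeholders.

```python
from typing import Any, Callable

Event = dict[str, Any]


def normalize(raw: Event) -> Event:
    """Map two assumed source formats onto one common schema."""
    return {
        "timestamp": raw.get("ts", raw.get("timestamp")),
        "severity": str(raw.get("severity", raw.get("level", "info"))).lower(),
        "message": raw.get("msg", raw.get("message", "")),
        "attributes": dict(raw.get("attributes", {})),
    }


# Fields the (hypothetical) SIEM destination actually needs.
SIEM_FIELDS = ("timestamp", "severity", "message")

# Per-destination transforms; each returns a new top-level dict.
DESTINATIONS: dict[str, Callable[[Event], Event]] = {
    "open_source_backend": lambda event: dict(event),  # full record
    "siem": lambda event: {k: event[k] for k in SIEM_FIELDS},  # trimmed payload
}


def fan_out(raw: Event) -> dict[str, Event]:
    """Normalize once, then produce one payload per destination."""
    event = normalize(raw)
    return {name: transform(event) for name, transform in DESTINATIONS.items()}


if __name__ == "__main__":
    out = fan_out({"ts": 1700000000, "level": "ERROR", "message": "payment failed",
                   "attributes": {"order_id": "A1"}})
    print(out["siem"])
    # {'timestamp': 1700000000, 'severity': 'error', 'message': 'payment failed'}
    print(out["open_source_backend"]["attributes"])  # {'order_id': 'A1'}
```

With this shape, adding or swapping a vendor means changing one entry in `DESTINATIONS` rather than re-instrumenting every service, which is the decoupling described above.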

4. Contextual Troubleshooting: See the Full Picture

Logs without context are just noise. Metrics without dimensions are blind. A telemetry pipeline can attach metadata, such as container IDs, region tags, deployment versions, and user session IDs, to your data before it even reaches your toolchain.

Now, when something goes sideways, you're not playing "grep and guess." Instead, you’re navigating a correlated, searchable, and tagged dataset that gives your team a shared source of truth.

This is crucial for:

- Cross-team debugging where infrastructure and app logs need to tell the same story.

- Post-incident reviews where you want clarity, not chaos.

- Reducing mean time to resolution (MTTR) through smarter, more targeted insights.
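Enrichment is typically a merge of each event with metadata the pipeline already knows about the environment. The sketch below assumes that metadata is read from environment variables named `REGION`, `DEPLOY_VERSION`, and `CONTAINER_ID` (hypothetical names; real deployments might pull it from the Kubernetes API or a cloud metadata service instead) and attaches it under a `resource` key without overwriting values the source already set.

```python
import os
from typing import Any

Event = dict[str, Any]


def load_static_metadata() -> dict[str, str]:
    """Read deployment metadata from environment variables (names are assumptions)."""
    return {
        "region": os.environ.get("REGION", "unknown"),
        "deployment_version": os.environ.get("DEPLOY_VERSION", "unknown"),
        "container_id": os.environ.get("CONTAINER_ID", "unknown"),
    }


def enrich(event: Event, metadata: dict[str, str]) -> Event:
    """Return a copy of event with metadata merged into its "resource" tags.

    Values the source already set take precedence over pipeline-supplied ones.
    """
    resource = dict(metadata)
    resource.update(event.get("resource", {}))  # source-provided tags win
    return {**event, "resource": resource}


if __name__ == "__main__":
    meta = {"region": "us-east-1", "deployment_version": "v2.3.1", "container_id": "abc123"}
    event = {"message": "timeout calling inventory", "resource": {"region": "eu-west-1"}}
    print(enrich(event, meta)["resource"])
    # {'region': 'eu-west-1', 'deployment_version': 'v2.3.1', 'container_id': 'abc123'}
    print(enrich({"message": "ok"}, load_static_metadata())["resource"])
```

Letting source-provided values win is a judgment call: it avoids silently rewriting tags an application set on purpose, at the cost of trusting the source to be correct.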

5. Security and Compliance: Ship Data Responsibly

Telemetry pipelines can also act as a first line of defense for data privacy and regulatory compliance. You can redact PII before logs reach storage or third-party tools, enforce retention policies by routing data based on its classification, or unify telemetry across systems to support threat detection.

Security engineers love pipelines for exactly this reason—they give you proactive control over what gets logged, stored, and analyzed.

Example scenarios:

- Removing sensitive fields, such as access tokens or user emails, from logs.

- Routing logs with audit data to dedicated storage with restricted access.

- Enabling better threat correlation by enriching logs with infrastructure metadata.

In short, pipelines help you build observability that’s both powerful and responsible.
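Redaction and classification-based routing can live in the same stage. The Python sketch below masks a few assumed sensitive field names, scrubs email addresses and bearer tokens out of free-text messages with regular expressions, and sends events whose hypothetical `classification` field is `"audit"` to a restricted store. The field names, patterns, and destination names are placeholders; regex-based scrubbing is best effort and will miss formats it was not written for.

```python
import re
from typing import Any

Event = dict[str, Any]

SENSITIVE_KEYS = {"access_token", "password", "email"}  # assumed field names
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
BEARER_RE = re.compile(r"(?i)bearer\s+[A-Za-z0-9._~+/=-]+")


def redact(event: Event) -> Event:
    """Return a copy with sensitive fields masked and emails/tokens scrubbed from the message."""
    clean = {k: ("[REDACTED]" if k in SENSITIVE_KEYS else v) for k, v in event.items()}
    if isinstance(clean.get("message"), str):
        text = EMAIL_RE.sub("[EMAIL]", clean["message"])
        clean["message"] = BEARER_RE.sub("Bearer [TOKEN]", text)
    return clean


def destination(event: Event) -> str:
    """Route by a hypothetical "classification" field."""
    return "restricted_audit_store" if event.get("classification") == "audit" else "general_logs"


if __name__ == "__main__":
    raw = {"message": "login by jane@example.com with Bearer eyJhbGciOi.abc",
           "access_token": "secret", "classification": "audit"}
    clean = redact(raw)
    print(clean["message"])  # login by [EMAIL] with Bearer [TOKEN]
    print(clean["access_token"], destination(clean))  # [REDACTED] restricted_audit_store
```

Running redaction before routing means even the restricted store never holds the raw secrets, which keeps the access-control boundary from being the only safeguard.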

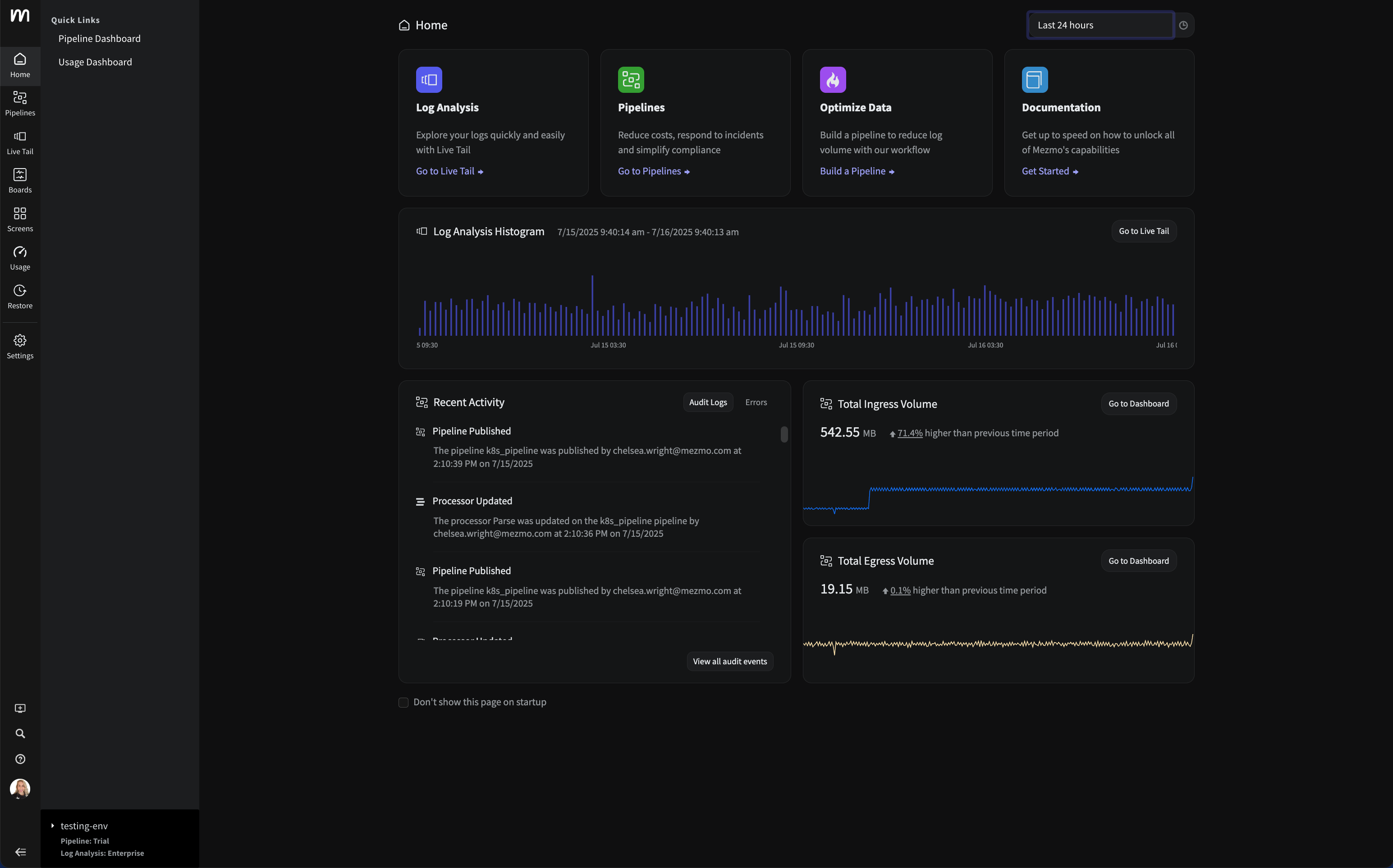

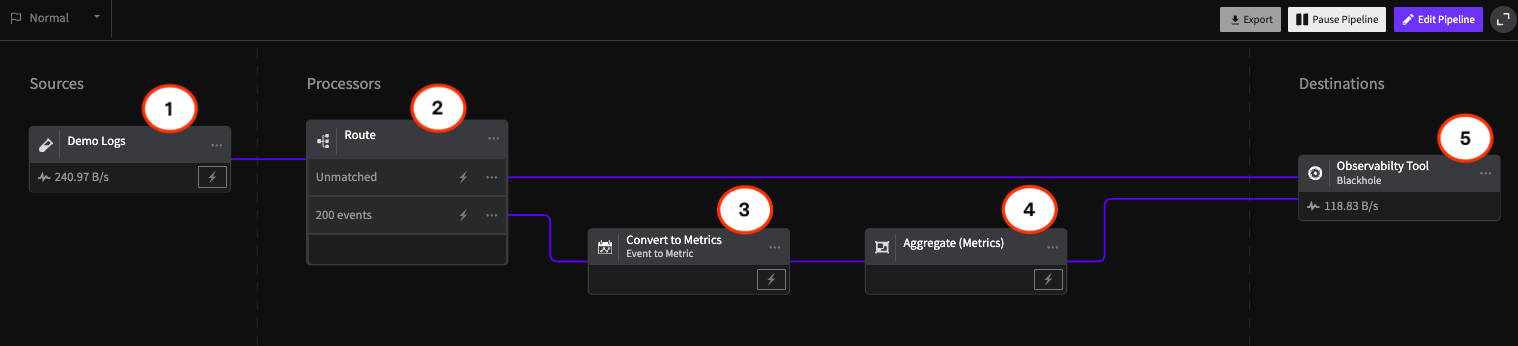

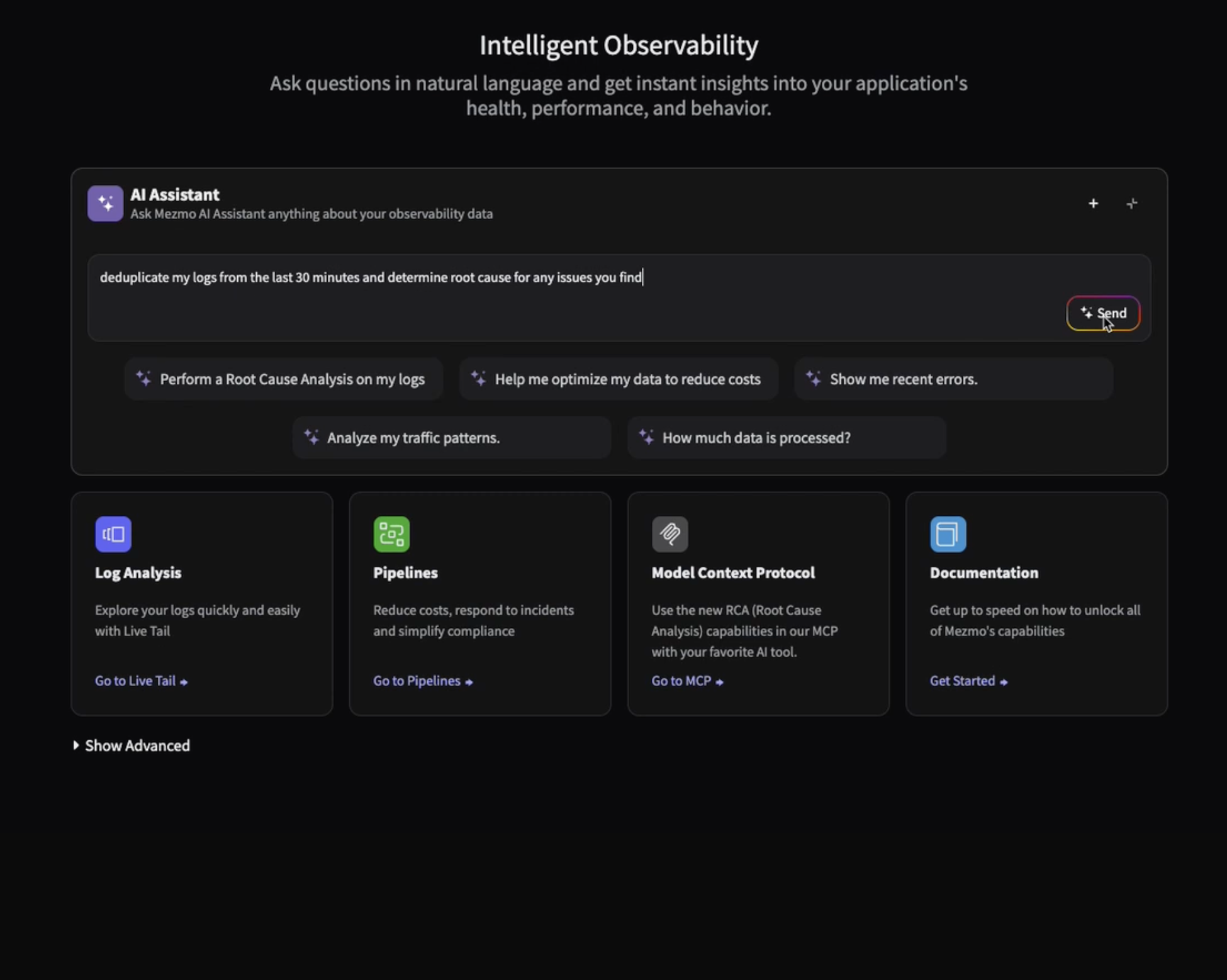

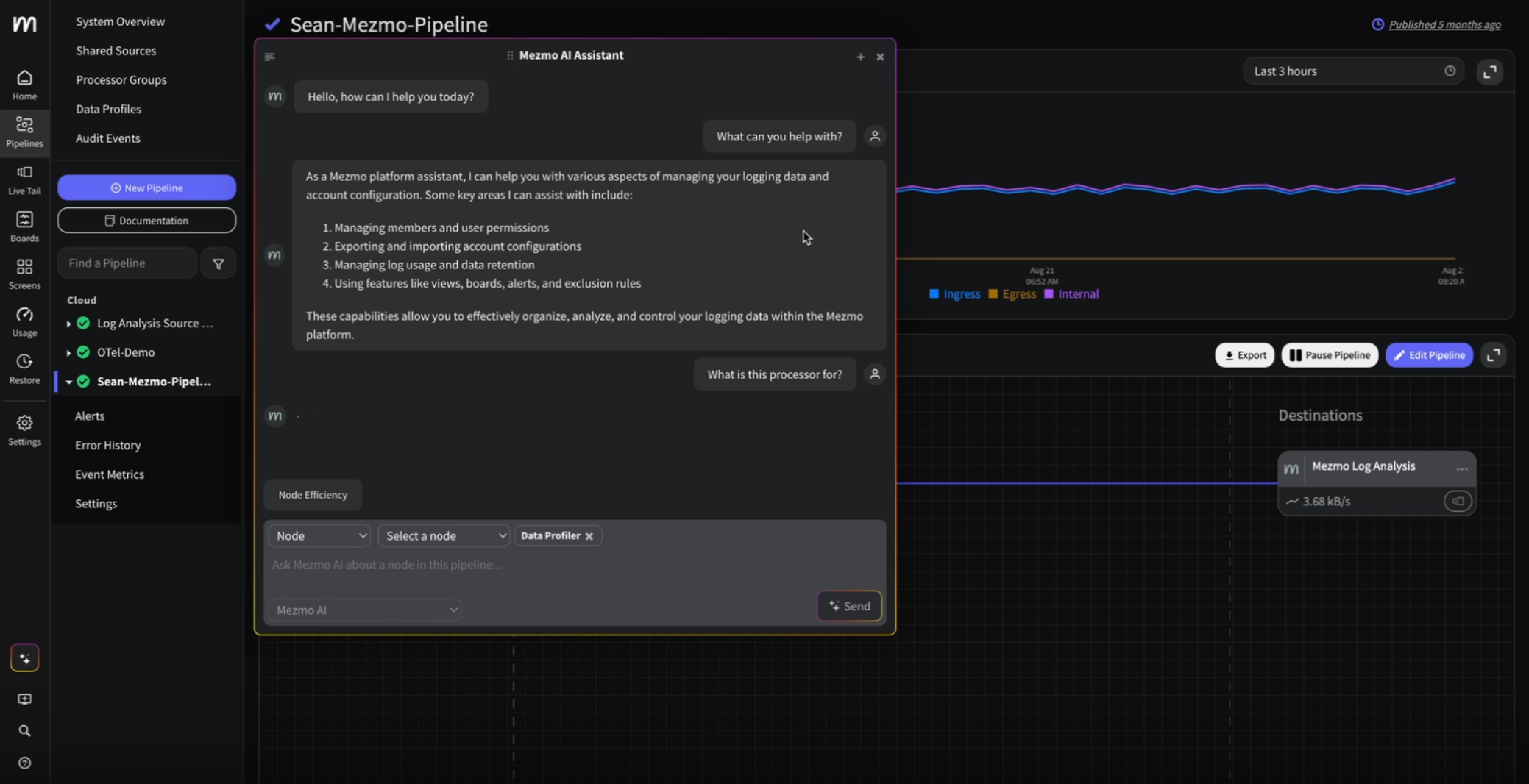

A Quick Look: Configuring a Telemetry Pipeline in Mezmo

Here’s a screen capture that shows how easy it is to configure filters, transformations, and routing logic using Mezmo’s visual interface. Whether you’re enriching logs with Kubernetes metadata, reducing Prometheus metric volume, or branching telemetry to multiple destinations, the process is intuitive and code-optional.

TL;DR: Your Observability Strategy Needs a Pipeline

Telemetry pipelines are no longer a “nice to have”—they’re essential. They give you control over your data, clarity in your operations, and confidence in your tooling. If you're still routing telemetry directly from source to sink, you're missing the chance to optimize, secure, and scale your observability the smart way.

Ready to dig in? Your future self (and your budget) will thank you. Start with a small win—like trimming log volume—by signing up for a free trial of Mezmo today.
