How to Cut Observability Costs with Synthetic Monitoring and Responsive Pipelines

Platform teams are struggling with observability noise, bloated storage costs, and a lack of clarity during incidents. Most teams capture everything all the time, which produces expensive, overwhelming, and often unnecessary data volumes.

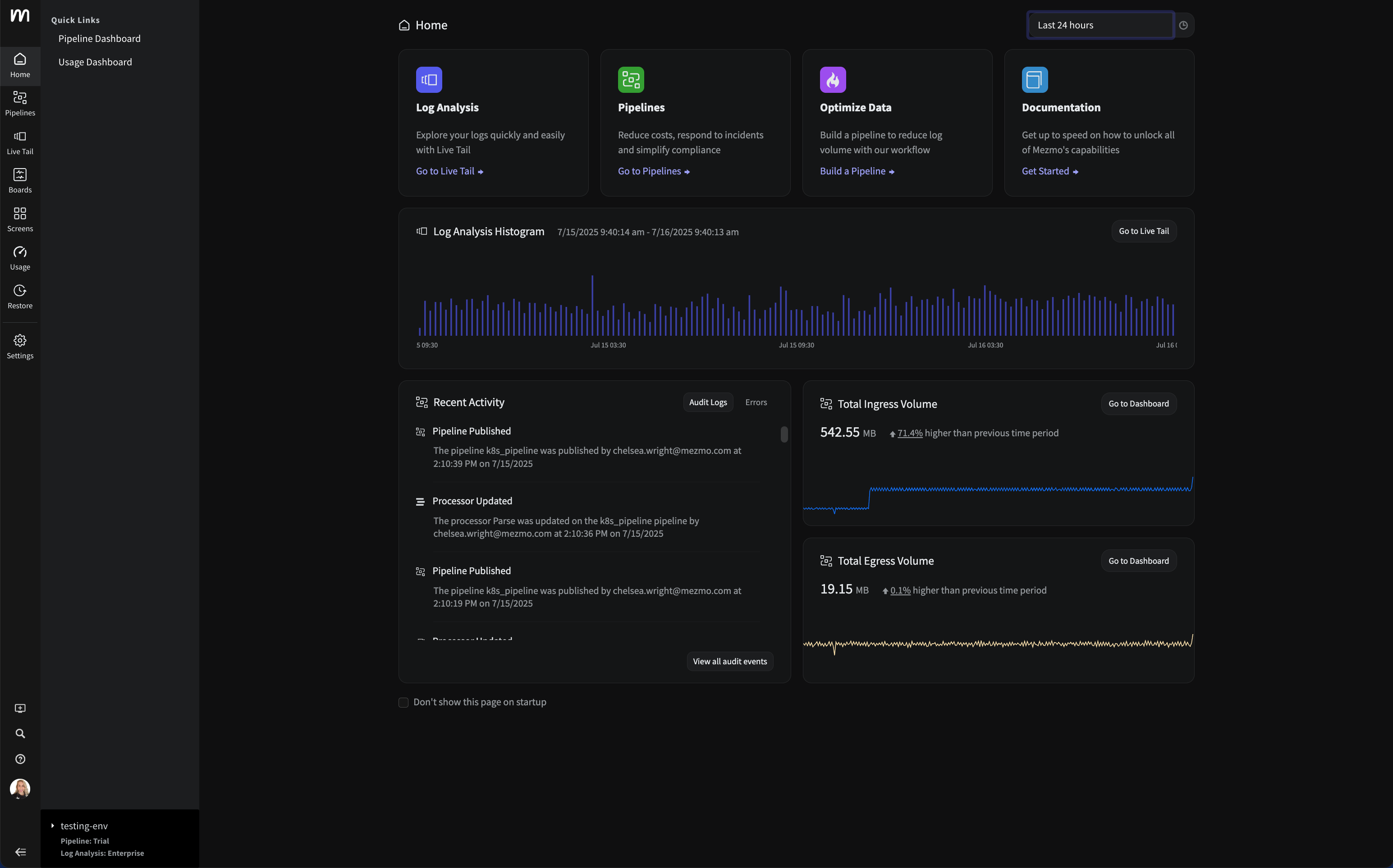

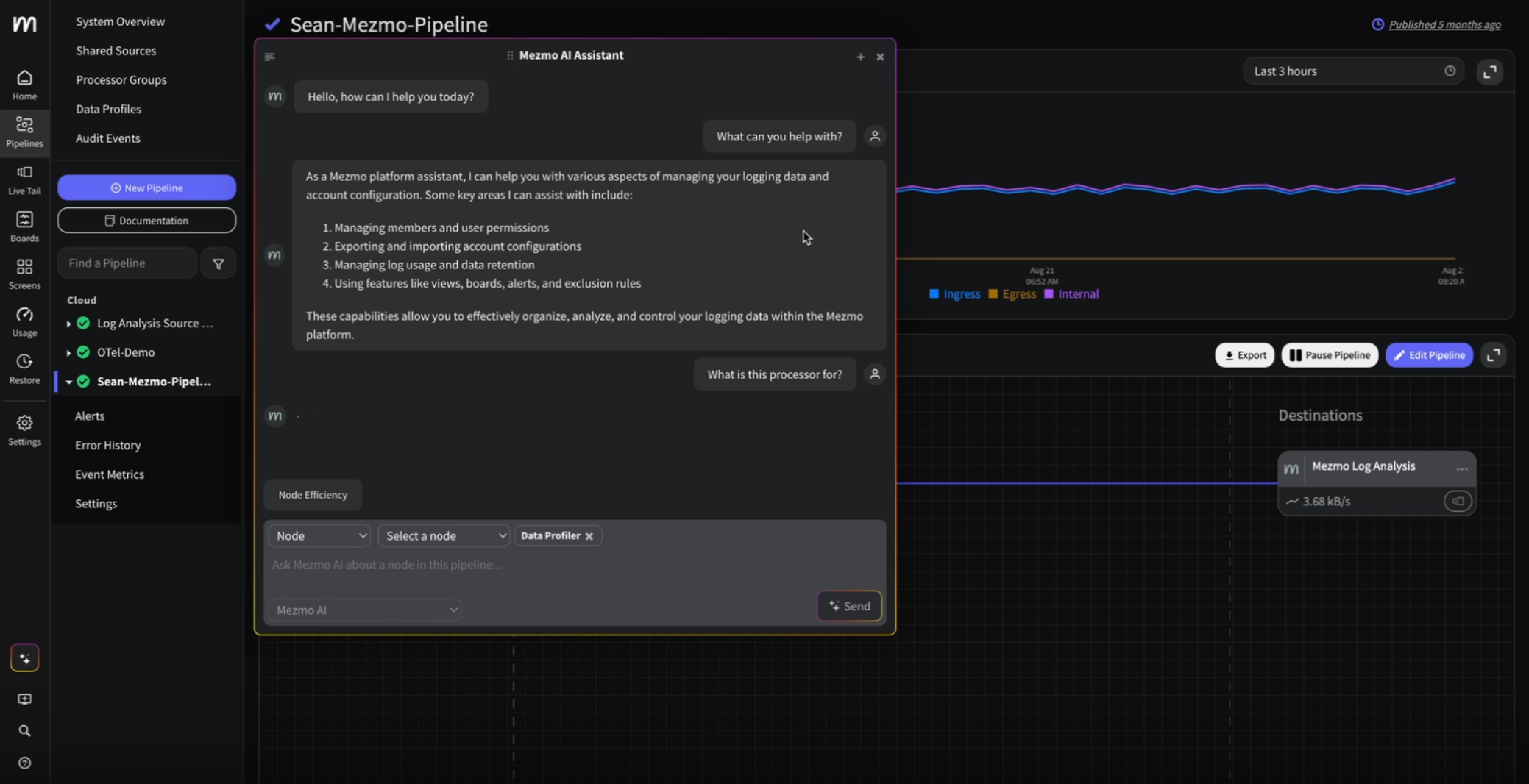

In Telemetry for Modern Apps, Mezmo teamed up with Checkly to demonstrate how synthetic monitoring triggers and responsive telemetry pipelines can help reduce costs while maintaining the context needed during incidents.

Below, we break down the technical implementation, performance benchmarks, and operational benefits for platform engineering teams.

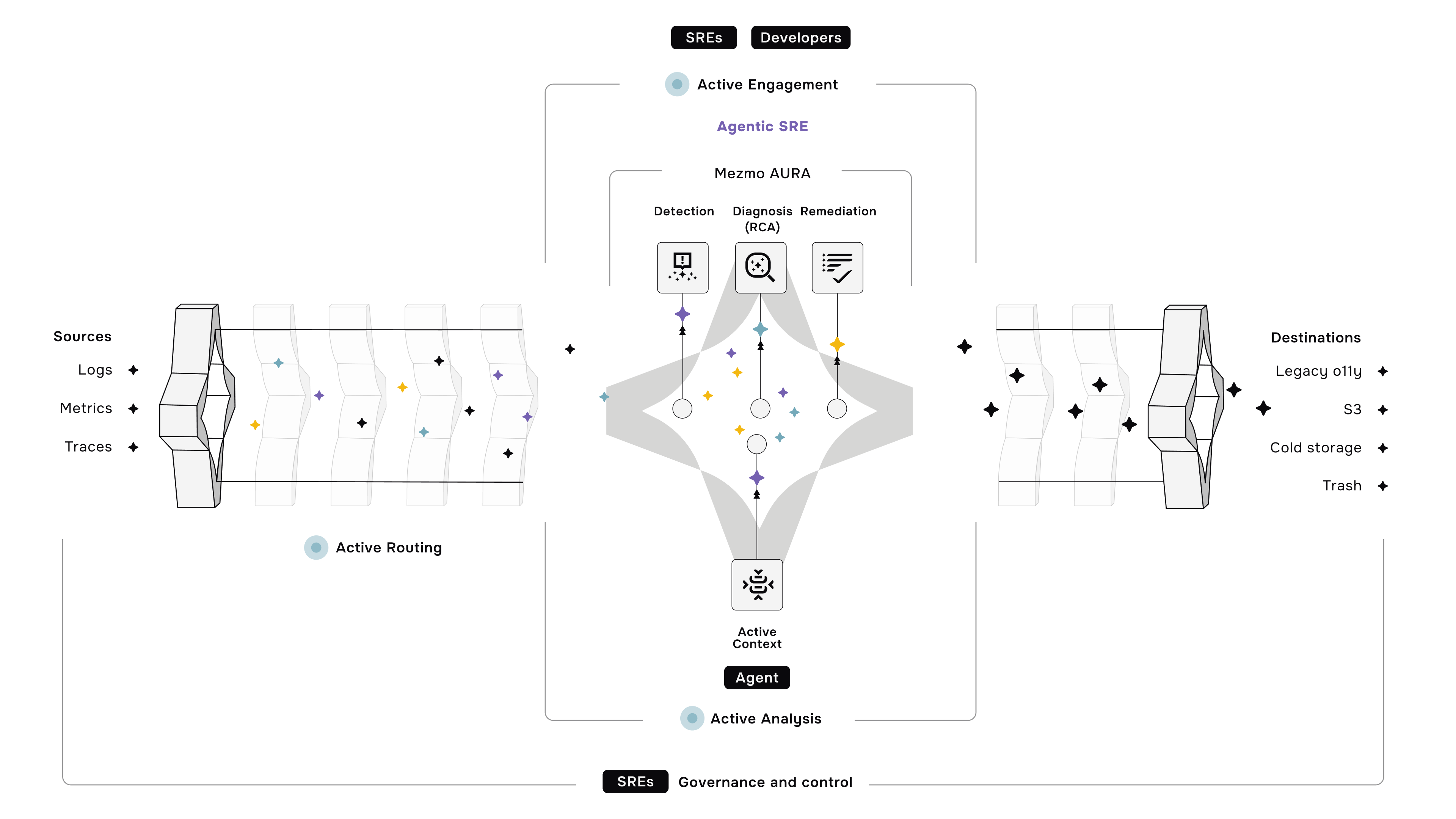

Responsive Telemetry: From Always-On to Event-Driven

Traditional observability platforms operate on a "capture everything, always" model. This creates operational challenges:

- Cost scaling: Observability costs increase linearly with traffic volume

- Signal degradation: Important alerts can get buried in low-priority noise

- Storage overhead: Most indexed data is never accessed during incidents

- Query complexity: Responders have to search through large data volumes and query results during time-sensitive incidents

Instead of constant high-fidelity ingestion, responsive pipelines use synthetic monitoring signals as intelligent triggers. Critical user flows are monitored continuously at full fidelity, while background telemetry operates under aggressive sampling until an incident occurs.

The result: full observability coverage when needed, minimal cost during normal operations.

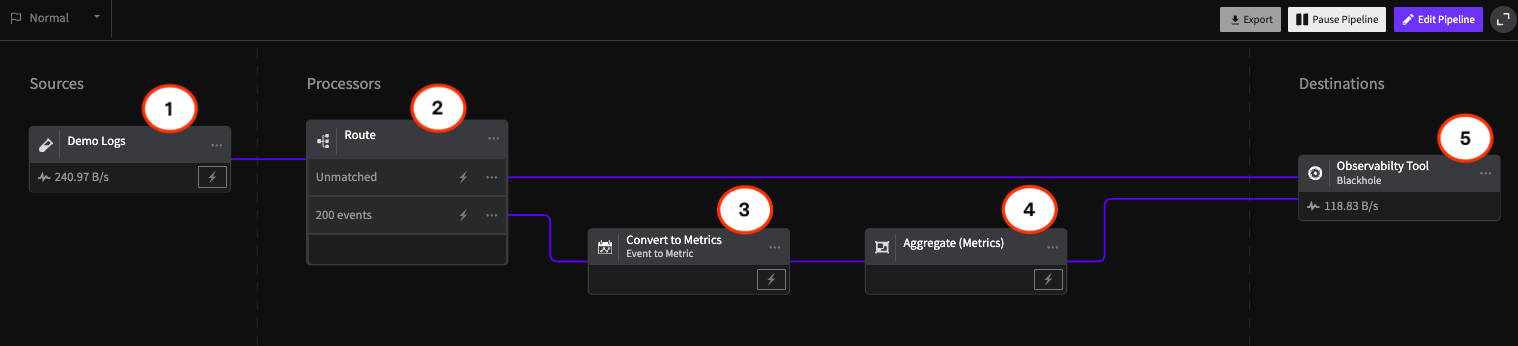

Technical Architecture: Synthetic-Driven Pipeline Controls

Here's the implementation we demonstrated using a sample e-commerce application:

Data Collection Layer

- All telemetry (synthetic + real-user) collected via OpenTelemetry collectors

- Synthetic traces from Checkly include custom headers: x-checkly-check-id and x-synthetic-flow

- Real-user traces tagged with session identifiers and geographic routing
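As a rough illustration of how the collection layer could separate the two kinds of traffic, here is a minimal sketch. The header names x-checkly-check-id and x-synthetic-flow come from the demo; the `Span` class, the `is_synthetic` and `route` helpers, and the pipeline names are hypothetical and are not part of the Mezmo, Checkly, or OpenTelemetry APIs.

```python
from dataclasses import dataclass, field

# Header names are taken from the article; everything else here is illustrative.
SYNTHETIC_HEADERS = ("x-checkly-check-id", "x-synthetic-flow")


@dataclass
class Span:
    """Hypothetical, simplified stand-in for a collected span."""
    trace_id: str
    service: str
    headers: dict = field(default_factory=dict)


def is_synthetic(span: Span) -> bool:
    """Return True if the span carries any of the synthetic-check headers."""
    # HTTP header names are case-insensitive, so normalize before comparing.
    names = {name.lower() for name in span.headers}
    return any(header in names for header in SYNTHETIC_HEADERS)


def route(span: Span) -> str:
    """Pick a (hypothetical) pipeline name for the span."""
    return "synthetic-full-fidelity" if is_synthetic(span) else "real-user-sampled"
```

In a real deployment this decision would more likely live in collector or pipeline configuration than in application code; the sketch only shows the branching logic.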

Pipeline Processing Logic

Normal Operations:

Synthetic Traces → Full Fidelity Processing → Primary Destinations

Real-User Traces → Head-Based Sampling (1:10) → Sampled Storage

During Incidents:

Incident Detected → Dynamic Rule Override → Full Capture Mode

↓

All Traces → Full Fidelity Processing → Incident Response Tools

Sampling Strategy

- Normal operations: 10% head-based sampling for real-user traces

- Synthetic flows: 100% retention with priority routing

- Incident response: Sampling disabled for affected services; historical buffer playback enabled for the duration of the incident
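The three rules above can be sketched as a single keep-or-drop decision. This is a minimal sketch under stated assumptions, not the pipeline's actual implementation: the `keep_trace` function, its parameters, and the use of a SHA-256 hash of the trace ID to make the 1-in-10 decision deterministic are all illustrative choices.

```python
import hashlib

SAMPLE_RATE = 10  # keep 1 in 10 real-user traces (the 1:10 ratio from the article)


def keep_trace(trace_id: str, synthetic: bool, service: str,
               incident_services: set[str]) -> bool:
    """Head-based sampling decision made once per trace, before processing."""
    # Synthetic flows are always retained at full fidelity.
    if synthetic:
        return True
    # Sampling is lifted for any service with an active incident.
    if service in incident_services:
        return True
    # Otherwise hash the trace ID so every span of a trace gets the same verdict.
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % SAMPLE_RATE
    return bucket == 0
```

Hashing the trace ID rather than drawing a random number means the decision is repeatable across collectors, so a trace is either kept whole or dropped whole.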

Dynamic Incident Response

When synthetic checks fail, Mezmo pipelines automatically:

- Lift sampling restrictions for the affected service cluster

- Enable buffered log playback (15-minute historical window)

- Route high-priority traces to incident management tools

- Increase log retention for post-incident analysis
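To make the buffered playback idea concrete, here is a small sketch of a time-windowed buffer that keeps only the last 15 minutes of records and can replay them when a check fails. The `PlaybackBuffer` class and its methods are hypothetical; the article does not describe how Mezmo implements its buffer, and this version assumes records arrive in timestamp order.

```python
from collections import deque

WINDOW_SECONDS = 15 * 60  # the 15-minute historical window from the article


class PlaybackBuffer:
    """Holds recent records so they can be replayed when an incident starts."""

    def __init__(self, window_seconds: float = WINDOW_SECONDS):
        self.window_seconds = window_seconds
        self._records = deque()  # (timestamp, record) pairs, oldest first

    def add(self, timestamp: float, record: str) -> None:
        """Store a record and drop anything older than the window."""
        self._records.append((timestamp, record))
        self._evict(timestamp)

    def _evict(self, now: float) -> None:
        cutoff = now - self.window_seconds
        while self._records and self._records[0][0] < cutoff:
            self._records.popleft()

    def replay(self, now: float) -> list:
        """Return the records still inside the window, oldest first."""
        self._evict(now)
        return [record for _, record in self._records]
```

A failing synthetic check would call `replay` once and forward the result to the incident tooling, then let live traffic flow unsampled.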

Performance Results

During the live demo with a sample e-commerce application:

- Telemetry volume reduction: Cut by over 10x through intelligent sampling

- Sampling strategy: 1-in-10 head-based sampling for real-user traces

- Synthetic coverage: Full-fidelity monitoring maintained for critical flows

The demonstration showed how synthetic monitoring provides consistent, predictable signals that enable precise pipeline control.

Operational Benefits for Platform Teams

Cost Optimization

- Reduce telemetry volume through intelligent sampling

- Send full-fidelity data only when needed

- Avoid paying to retain data that's rarely accessed during incidents

Incident Response

- Get full context during outages without constant high-volume ingestion

- Access historical data through buffered playback when incidents occur

- Focus on relevant signals instead of searching through noise

Engineering Productivity

- Platform teams spend less time managing observability costs

- Developers get debugging context when needed and minimal noise otherwise

- Clear separation between synthetic validation and user behavior analysis

Real-World Example: Catching Edge Cases

During the demo, a Checkly synthetic check passed (checkout flow completed), but trace analysis revealed a 404 error loading the shopping cart SVG icon. This wouldn't trigger traditional availability alerts but could impact conversion rates.

This demonstrates responsive telemetry's advantage: comprehensive coverage of critical paths without drowning in irrelevant data. The SVG issue was visible in synthetic traces at full fidelity, while similar issues in real-user traces might have been sampled out.

Implementation Considerations

Trace Routing

- Use OpenTelemetry semantic conventions for consistent tagging

- Implement trace context propagation for end-to-end synthetic flow tracking

- Configure separate processing pipelines for synthetic vs. real-user data

Pipeline Configuration

- Set sampling rates based on service criticality and traffic patterns

- Configure buffer sizes for historical playback (balance memory vs. context depth)

- Implement circuit breakers to prevent pipeline overload during incidents
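One way to picture the circuit breaker in the last bullet: count events during full-capture mode and fall back to sampling if the count exceeds a ceiling. The sketch below is illustrative only. The `CaptureBreaker` class, its thresholds, and the cooldown behaviour are assumptions, not a description of any Mezmo feature.

```python
class CaptureBreaker:
    """Refuses full capture for a cooldown period once a throughput ceiling is hit."""

    def __init__(self, max_events_per_window: int, window_seconds: float,
                 cooldown_seconds: float):
        self.max_events_per_window = max_events_per_window
        self.window_seconds = window_seconds
        self.cooldown_seconds = cooldown_seconds
        self._window_start = 0.0
        self._count = 0
        self._open_until = None  # set while the breaker is tripped

    def allow_full_capture(self, now: float) -> bool:
        """Return True if this event may be captured at full fidelity."""
        # While tripped, refuse full capture until the cooldown has passed.
        if self._open_until is not None:
            if now < self._open_until:
                return False
            self._open_until = None
            self._window_start = now
            self._count = 0
        # Start a fresh counting window when the current one has expired.
        if now - self._window_start >= self.window_seconds:
            self._window_start = now
            self._count = 0
        self._count += 1
        if self._count > self.max_events_per_window:
            self._open_until = now + self.cooldown_seconds
            return False
        return True
```

When `allow_full_capture` returns False, the caller would apply the normal sampling rule instead, so the pipeline degrades to sampled data rather than dropping everything.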

Monitoring the Monitor

- Track synthetic check reliability and geographic distribution

- Monitor pipeline processing latency and throughput

- Set alerts on sampling rate changes and buffer utilization
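The alerting bullet can be sketched as a simple check over two pipeline metrics. The `pipeline_alerts` function, the alert names, and the default tolerance and threshold values are hypothetical and chosen for illustration; suitable values depend on the pipeline.

```python
def pipeline_alerts(expected_rate: float, observed_rate: float,
                    buffer_used: int, buffer_capacity: int,
                    rate_tolerance: float = 0.02,
                    buffer_threshold: float = 0.8) -> list:
    """Return the names of any alerts that should fire for the pipeline."""
    alerts = []
    # The sampling rate changed, for example because an incident override kicked in.
    if abs(observed_rate - expected_rate) > rate_tolerance:
        alerts.append("sampling_rate_drift")
    # The playback buffer is close to full and may start losing history.
    if buffer_capacity > 0 and buffer_used / buffer_capacity >= buffer_threshold:
        alerts.append("buffer_utilization_high")
    return alerts
```

For example, an expected rate of 0.10 with an observed rate of 1.0 flags the drift, which is the signal that full-capture mode is active and costs are temporarily higher.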

Why This Approach Works for Platform Engineering

Most observability vendors optimize for data ingestion volume, not operational efficiency. This creates misaligned incentives where cost control requires reducing visibility.

Synthetic-driven responsive pipelines flip this model:

- Proactive monitoring of critical user journeys at full fidelity

- Reactive scaling of telemetry collection based on actual incidents

- Predictable costs that scale with business logic, not raw traffic

Platform teams gain granular control over the observability/cost tradeoff without sacrificing incident response capability.

Next Steps

The Mezmo + Checkly integration demonstrates how modern telemetry pipelines can be both cost-effective and operationally robust. By using synthetic monitoring as an intelligent control plane, platform teams can achieve better incident outcomes while reducing observability spend.

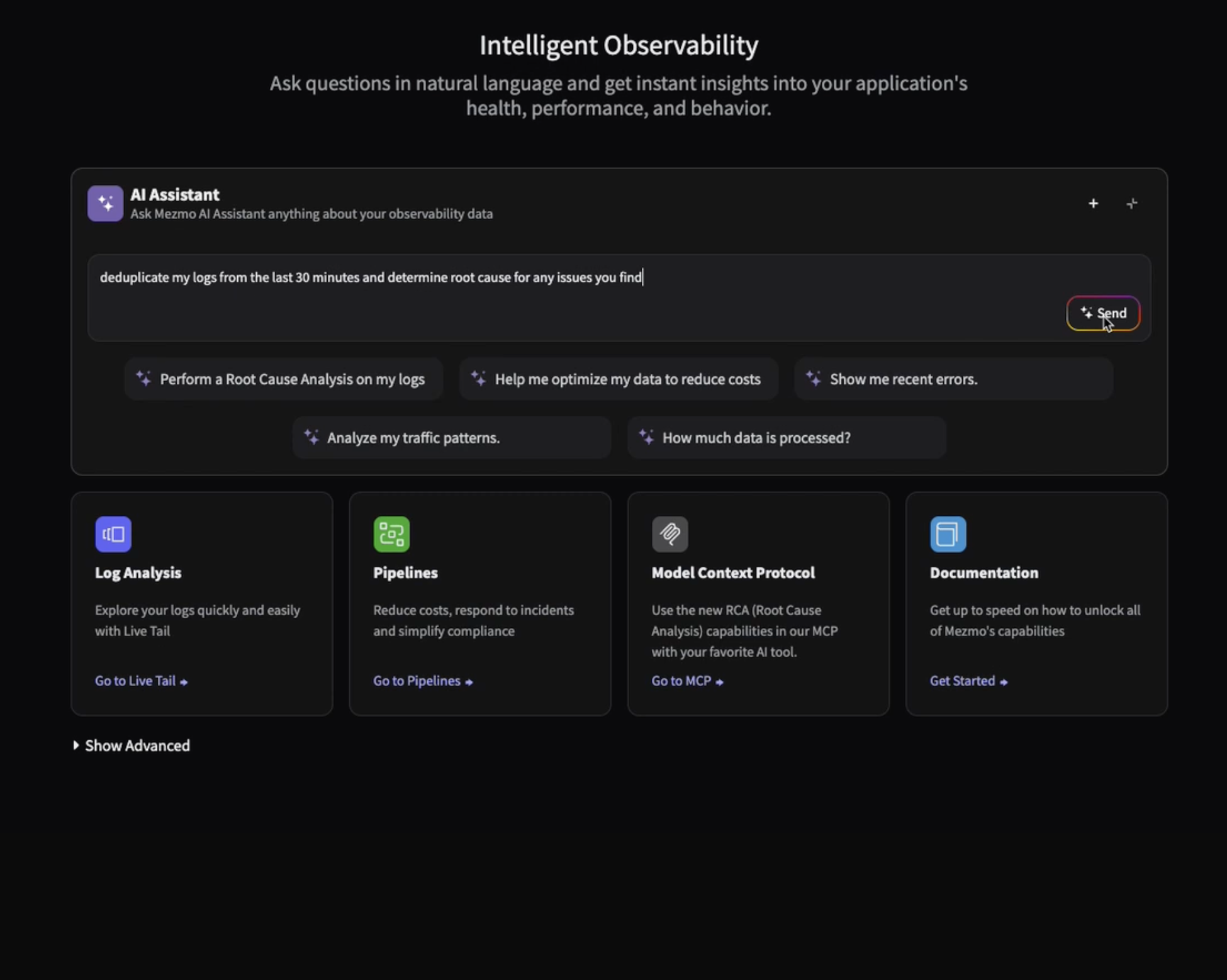

Ready to implement responsive telemetry?
