Taming Your Dynatrace Bill: How to Cut Observability Costs, Not Visibility

Dynatrace is a powerhouse for application performance monitoring and business analytics. But for many organizations, its power comes with a significant challenge: as applications scale across complex hybrid environments and diverse tech stacks, the sheer volume and variety of logs, metrics, and traces sent to the platform can explode, leading to staggering and unpredictable costs.

This creates a classic observability dilemma. Your developers want to log everything "just in case" for troubleshooting. The result is predictable: you exceed your data plan and pay for overages. Without the technical levers to filter telemetry upstream, you can’t satisfy developers’ need for high-cardinality data without absorbing unpredictable costs. For platform and finance teams, observability cost optimization has become a top-tier initiative, driven by the reality that telemetry data growth consistently outpaces the falling cost of storage.

Traditional solutions are full of painful compromises. Aggressively filtering data at the source risks losing critical information needed for an investigation. Archiving data to cold storage like Amazon S3 seems cost-effective, but rehydrating that data is far too slow during a real-time incident, extending downtime and frustrating engineers.

So how do you control costs without sacrificing the visibility your teams need? The answer lies in intelligently controlling your data before it hits your Dynatrace bill.

The Problem: Why Observability Costs Spiral Out of Control

The core issue isn't just data volume; it's data value. A significant portion of the data sent to platforms like Dynatrace is high-volume but low-signal, leading to inflated costs for minimal benefit. Key culprits include:

- Noisy, Low-Value Logs: Verbose DEBUG or INFO logs that are useful during development but create overwhelming noise and cost in production.

- High-Cardinality Metrics: Labels with many unique values, such as pod_id or customer_id, make metric cardinality grow multiplicatively, because each unique combination of label values creates a new time series.

- Redundant Traces: Capturing every single trace from routine system health checks offers little value for troubleshooting but contributes significantly to data volume.
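To get a feel for how quickly cardinality adds up, a rough back-of-the-envelope calculation helps. The sketch below assumes a hypothetical metric with three labels; the label names and value counts are illustrative assumptions, not figures from any real environment. In the worst case, the number of time series is the product of the distinct values of each label.

```python
from math import prod

# Hypothetical distinct-value counts per label for one metric (illustrative only).
label_values = {
    "endpoint": 20,
    "status_code": 5,
    "pod_id": 50,
}

def series_count(labels: dict[str, int]) -> int:
    """Worst-case time series: the product of distinct values across all labels."""
    return prod(labels.values())

baseline = series_count(label_values)  # 20 * 5 * 50
# Assumption: adding a customer_id label with 1,000 distinct customers.
with_customer = series_count({**label_values, "customer_id": 1000})

print(f"without customer_id: {baseline:,} series")
print(f"with customer_id:    {with_customer:,} series")
```

Under these assumed numbers, one extra label takes a single metric from 5,000 potential series to 5,000,000.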

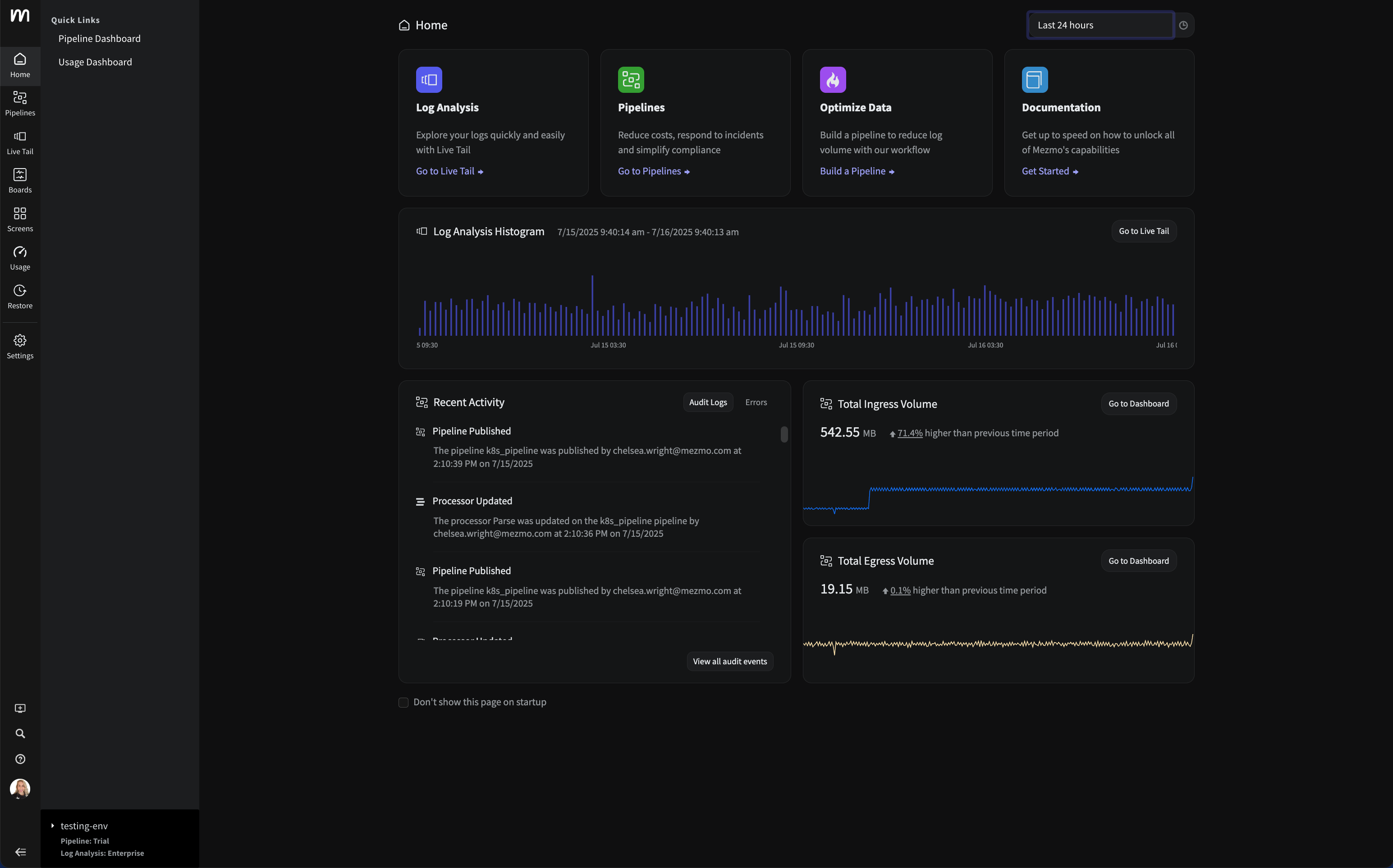

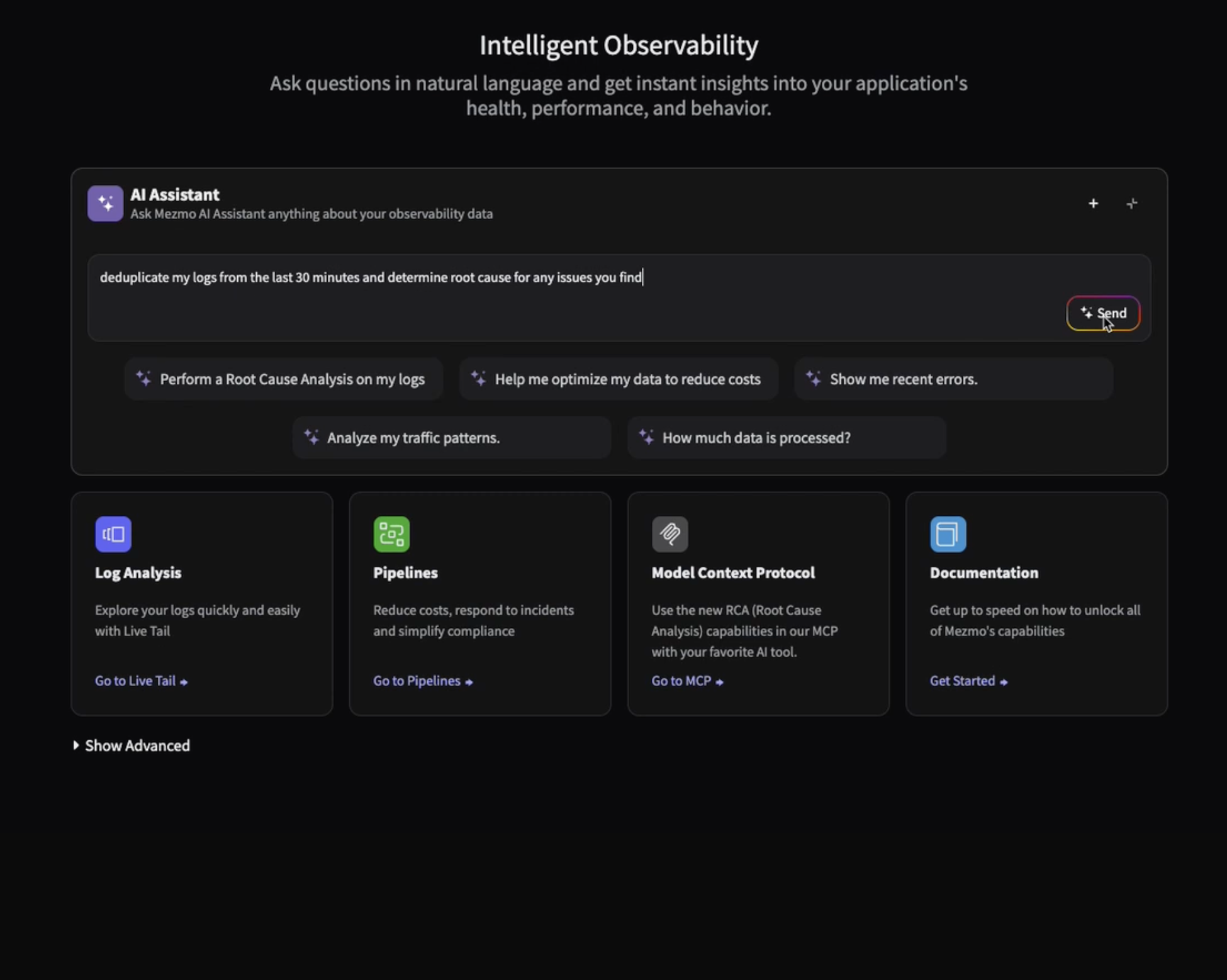

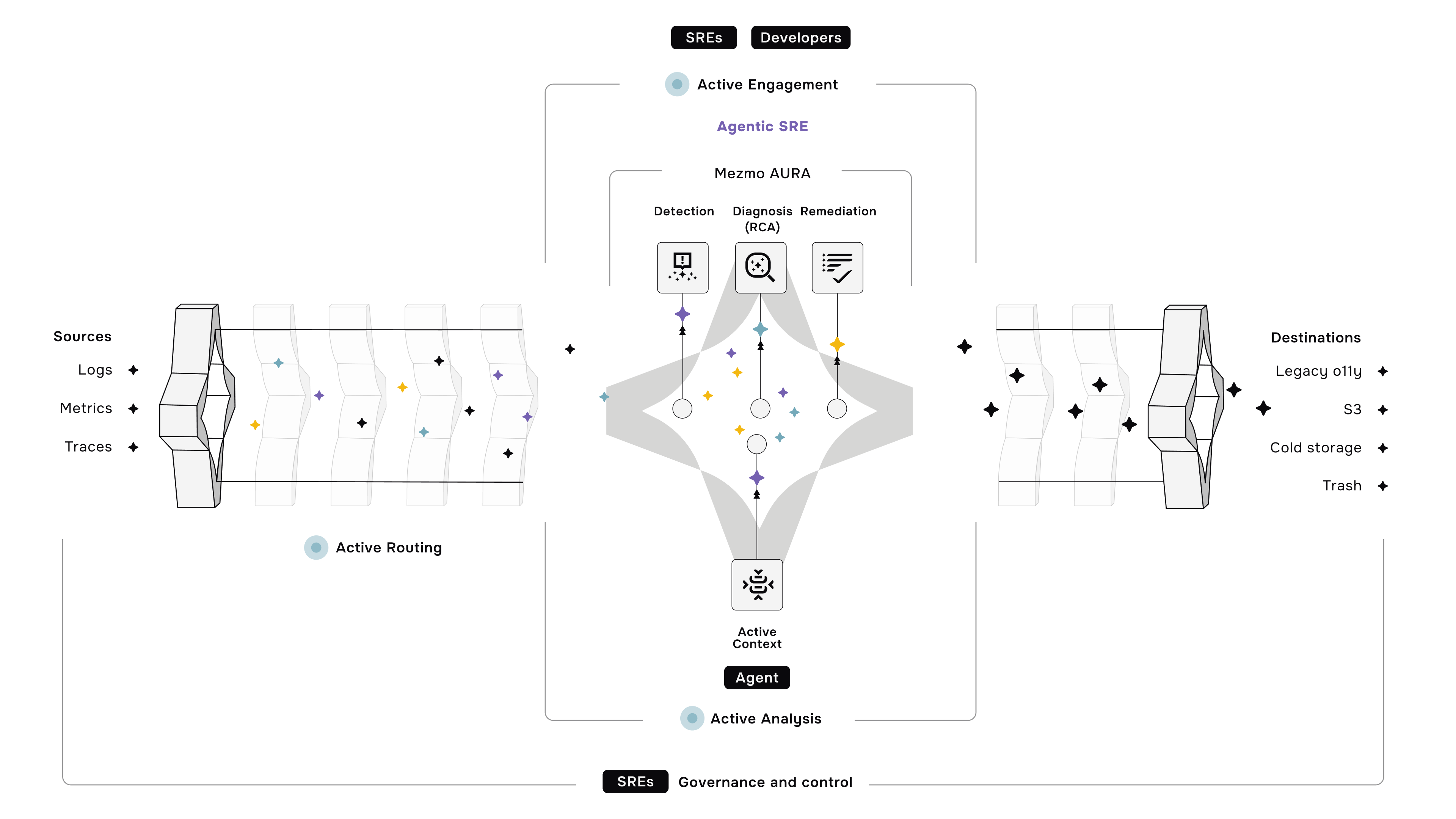

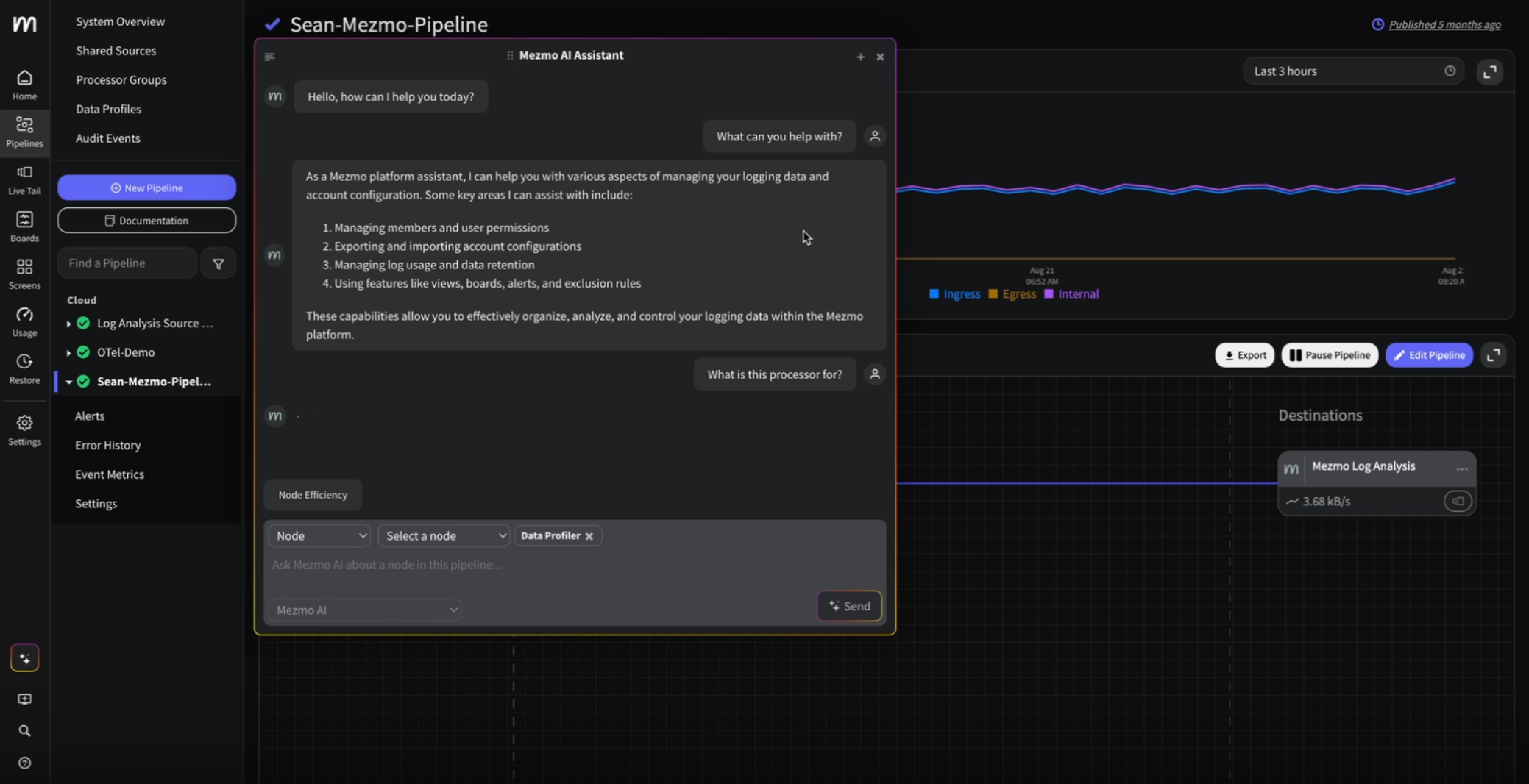

The Solution: Mezmo as Your Intelligent Data Control Plane

Mezmo sits upstream from Dynatrace, acting as an intelligent control plane for your telemetry data. Instead of making risky, all-or-nothing decisions about what to keep, Mezmo allows you to shape, enrich, and route your data, ensuring only high-value signals reach Dynatrace.

Here’s how Mezmo helps you get costs under control:

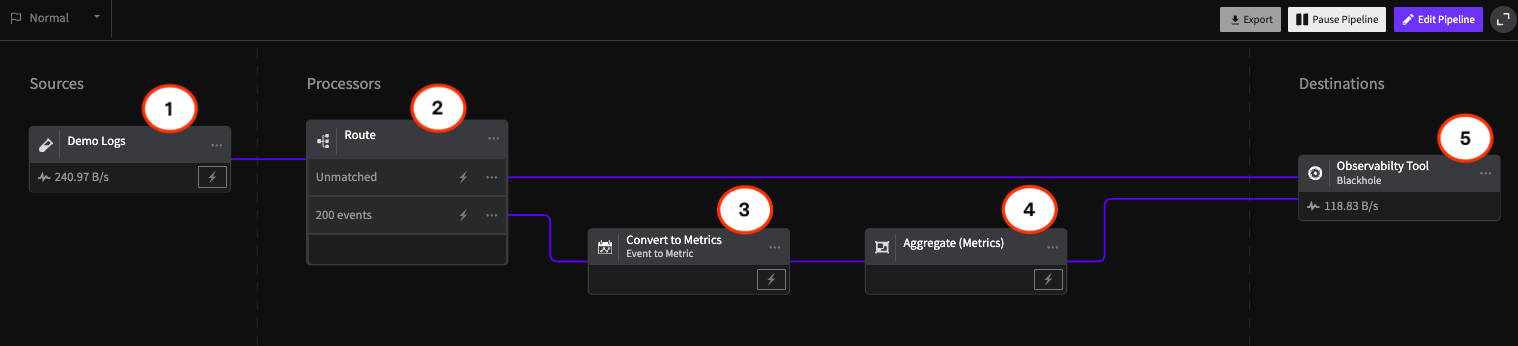

- Profile Data to Find Savings: You can't optimize what you can't see. Mezmo's Data Profiling automatically analyzes your data streams, identifying redundancies, high-cardinality fields, and high-volume sources. This gives you a clear roadmap for what to cut, aggregate, or transform.

- Aggregate Metrics to Slash Costs: One of the biggest cost drivers is custom metrics. With Mezmo's Metric Aggregation & Shaping, you can turn thousands of high-volume custom metrics into a few high-value aggregates (like p95, p99, and average). This slashes costs while improving the quality of your performance signals.

- Manage Cardinality Explosions: Prevent runaway costs with Cardinality Management. Mezmo gives you explicit control to filter and reduce the unique labels attached to your metrics, stopping cost explosions before they happen.

- Filter Smarter, Not Harder: Use Real-time Log Filtering to eliminate high-volume, low-value logs before they are ever sent to Dynatrace. Then, use Tail-Based Sampling for traces to automatically drop noisy, redundant traces from healthy services while guaranteeing you capture 100% of the traces that are important for troubleshooting errors and latency.
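The shaping steps above can be sketched in a few lines of Python. This illustrates the general techniques only; it is not Mezmo's API or configuration. The function names, the `DROP_LEVELS` set, the record fields (`level`, `spans`, `error`, `duration_ms`, `trace_id`), and the thresholds are all assumptions made for the example.

```python
import zlib
from statistics import mean, quantiles

# Assumption: these levels are treated as low-value in production.
DROP_LEVELS = {"DEBUG", "INFO"}

def keep_log(record: dict) -> bool:
    """Log filtering: drop records whose level is in DROP_LEVELS."""
    return str(record.get("level", "")).upper() not in DROP_LEVELS

def aggregate(samples: list[float]) -> dict[str, float]:
    """Metric aggregation: collapse raw samples (at least two) into avg, p95, p99."""
    cuts = quantiles(samples, n=100, method="inclusive")  # 99 percentile cut points
    return {"avg": mean(samples), "p95": cuts[94], "p99": cuts[98]}

def keep_trace(trace: dict, slow_ms: float = 500.0, baseline_pct: int = 10) -> bool:
    """Tail-based sampling: decide only after the whole trace has been collected."""
    spans = trace["spans"]
    if any(span.get("error") for span in spans):
        return True  # always keep traces that contain an error
    if max((span["duration_ms"] for span in spans), default=0.0) >= slow_ms:
        return True  # always keep traces with a slow span
    # Healthy trace: keep a stable share (about baseline_pct%) by hashing the trace id.
    return zlib.crc32(trace["trace_id"].encode()) % 100 < baseline_pct

# Usage with made-up records.
logs = [
    {"level": "debug", "msg": "cache probe"},
    {"level": "ERROR", "msg": "payment failed"},
]
kept_logs = [record for record in logs if keep_log(record)]
latency_stats = aggregate([float(ms) for ms in range(1, 101)])
```

Hashing the trace ID, rather than drawing a random number, keeps the sampling decision stable if the same healthy trace is evaluated more than once.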

Better Together: Dynatrace + Mezmo

By placing Mezmo in front of Dynatrace, you don't replace its power; you amplify it. You get the world-class AI-powered analytics of Dynatrace, fed with a curated, cost-effective, high-signal dataset. It’s the best of both worlds. Netlink Voice, a Mezmo customer, reduced their overall telemetry data volume by 50% using Mezmo's pipelines to filter and parse their data. Watch their story here.

Stop Choosing Between Cost and Context.

With Mezmo, you can intelligently reduce your Dynatrace data volume and costs at the source, without losing the critical information your teams need.

Start your 30-day free trial here.
