LogDNA vs. Logz.io

LogDNA is now Mezmo, but the insights you know and love are here to stay.

What Does Logz.io Do?

Logz.io is a SaaS (software as a service) provider with an observability offering made up of various managed open source technologies. These include the Elastic Stack for logging and SIEM (security information and event management), Prometheus for monitoring, and Jaeger for tracing. The company positions itself as an alternative to the Elastic Stack (or ELK Stack), which is made up of Elasticsearch, Logstash, Kibana, and Beats. It has also added some features of its own, such as Smart Tiering, which allows users to move older logs to cheaper storage tiers.

Logz.io Disadvantages

If you’re looking for a managed alternative to the Elastic Stack, Logz.io may be a good fit for you. However, if you’ve managed and scaled your own ELK Stack before, you know that it has its limitations. The Elastic Stack is not a "set it and forget it" solution. As log volume increases, queries slow down and resource usage climbs significantly. These issues can still surface in a managed service like Logz.io.

Logz.io Log Management Pricing

For Logz.io’s log management offering, self-service plans are priced based on indexed gigabytes (GB) and retention. Log retention ranges from one to forty-five days, and the daily index rate is 2 GB. Overage pricing, which Logz.io calls “On-Demand,” is billed at a 40% additional charge.
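To make the overage model concrete, here is a rough sketch of how a 40% surcharge could play out on a single day. The per-GB rate is a made-up placeholder, and both the flat plan charge and the idea that the surcharge applies only to gigabytes above the daily index rate are assumptions for illustration, not Logz.io's published billing formula.

```python
def daily_cost(indexed_gb, plan_gb=2.0, rate_per_gb=1.00, surcharge=0.40):
    """Estimate one day's cost under an assumed overage model.

    rate_per_gb is a hypothetical placeholder, not a real Logz.io price.
    Assumptions: the plan volume is billed in full even if unused, and
    only the volume above plan_gb is billed at the surcharged rate.
    """
    base = plan_gb * rate_per_gb
    overage_gb = max(indexed_gb - plan_gb, 0.0)
    return base + overage_gb * rate_per_gb * (1 + surcharge)
```

With the placeholder rate of 1.00 per GB, indexing 3 GB against a 2 GB plan would come to 2.00 for the plan plus 1.40 for the extra gigabyte.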

There is no limit on the number of users on a self-service plan, and all plans include SOC 2, GDPR, and HIPAA compliance. However, if you need PCI compliance, you must arrange a custom plan with them.

If you also want to use their Infrastructure Monitoring, Cloud SIEM, and/or Distributed Tracing products, you must pay for them separately.

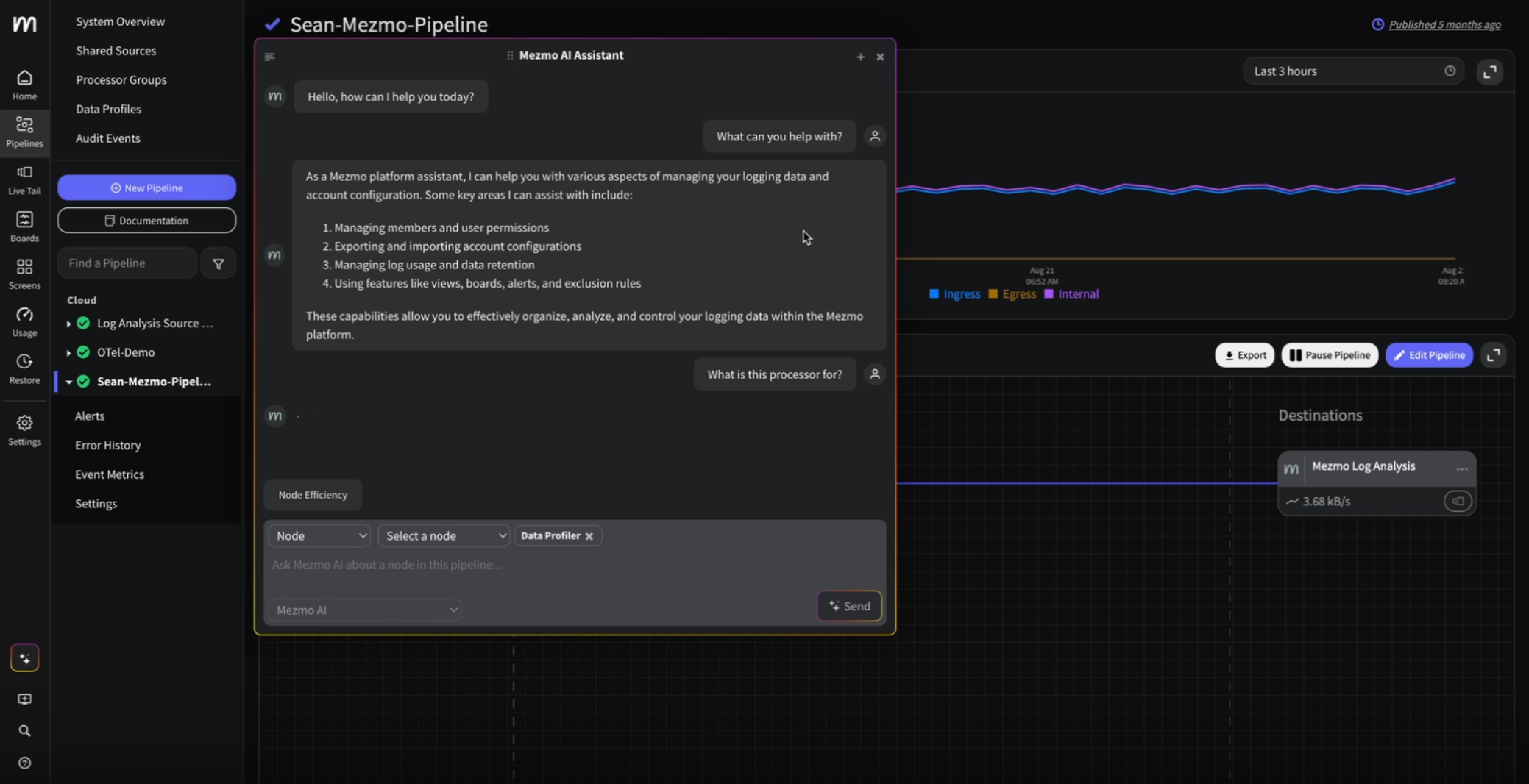

Benefits of Using LogDNA

As noted above, one of the most common struggles with the Elastic Stack is its performance at scale. As the amount of log data that companies produce and consume continues to grow, your log management solution must be able to keep pace. You may experience similar lags in ingestion and queries with managed versions of ELK, like Logz.io.

LogDNA was built to ingest, process, and analyze log data at hyperscale, with some of our customers sending us upwards of four petabytes (PBs) of logs per month. We built our proprietary messaging bus to address the scaling challenges of Logstash. It intelligently detects log types, automatically parses them, and indexes them in a way that makes keyword search fast and easy to use. For non-standard log types, Custom Parsing rules can be configured for any proprietary formats.

Being able to handle log data at scale is only one part of the equation. You must also be able to understand and control the volume of data that you store. LogDNA offers multiple control features to self-service customers, including Exclusion Rules. These let you filter out logs by source, by app, or by specific query, so that logs you want to see in Live Tail and be alerted on, but don’t want to store, are never retained. Since we charge based on retention, these logs won’t count towards your bill. You can also automatically archive logs to Amazon S3, IBM Cloud Object Storage, Google Cloud Storage, Azure Blob Storage, and more.
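The idea behind an exclusion rule can be sketched in a few lines. This is an illustration only, not LogDNA's implementation: the `ExclusionRule` class, the `should_store` helper, the log dictionary fields, and the use of a plain substring match in place of a real query language are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class ExclusionRule:
    """Hypothetical rule: match by source, by app, or by a query string."""
    sources: set = field(default_factory=set)
    apps: set = field(default_factory=set)
    query: str = ""  # substring match stands in for a real search query

    def matches(self, log):
        return (
            log["source"] in self.sources
            or log["app"] in self.apps
            or (bool(self.query) and self.query in log["message"])
        )


def should_store(log, rules):
    """Return False when any rule excludes the log from storage.

    An excluded log could still be shown in a live view or trigger alerts;
    this function only decides whether it is retained.
    """
    return not any(rule.matches(log) for rule in rules)
```

For instance, a rule with `apps={"healthcheck"}` would keep noisy health check lines out of storage while leaving every other app's logs untouched.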

For enterprise customers who need advanced control features, LogDNA launched the Spike Protection Bundle, which includes Index Rate Alerting and Usage Quotas. When there’s an unexpected spike in ingestion, Index Rate Alerting provides actionable insight into which applications, sources, or tags produced the data spike, as well as any recently added sources. Usage Quotas let you specify soft and hard thresholds for the volume of log files to store. When a threshold is met, LogDNA will notify you and apply your defined configurations.
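As a minimal sketch of the soft and hard threshold idea, the check below classifies current stored volume against two limits. The `UsageQuota` class, its field names, and the returned state labels are hypothetical and chosen for illustration; what happens at each threshold is left to whatever configuration you define.

```python
from dataclasses import dataclass


@dataclass
class UsageQuota:
    """Hypothetical quota with a soft and a hard storage threshold, in GB."""
    soft_gb: float
    hard_gb: float

    def evaluate(self, stored_gb):
        # Check the hard threshold first so it takes precedence over soft.
        if stored_gb >= self.hard_gb:
            return "hard"
        if stored_gb >= self.soft_gb:
            return "soft"
        return "ok"
```

A caller might send a warning on `"soft"` and stop retaining lower-priority logs on `"hard"`, though those specific actions are assumptions rather than documented behavior.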

Earlier this month, we announced our early access program for Variable Retention, which gives you the flexibility to save logs in LogDNA only for the amount of time that they're relevant. This makes it possible to ingest new types of log data while keeping cost under control, allowing teams to leverage their logs for even more use cases. Our engineering team has even more control features on the product roadmap that will become available over the course of the next year.
