Why Your Telemetry (Observability) Pipelines Need to be Responsive

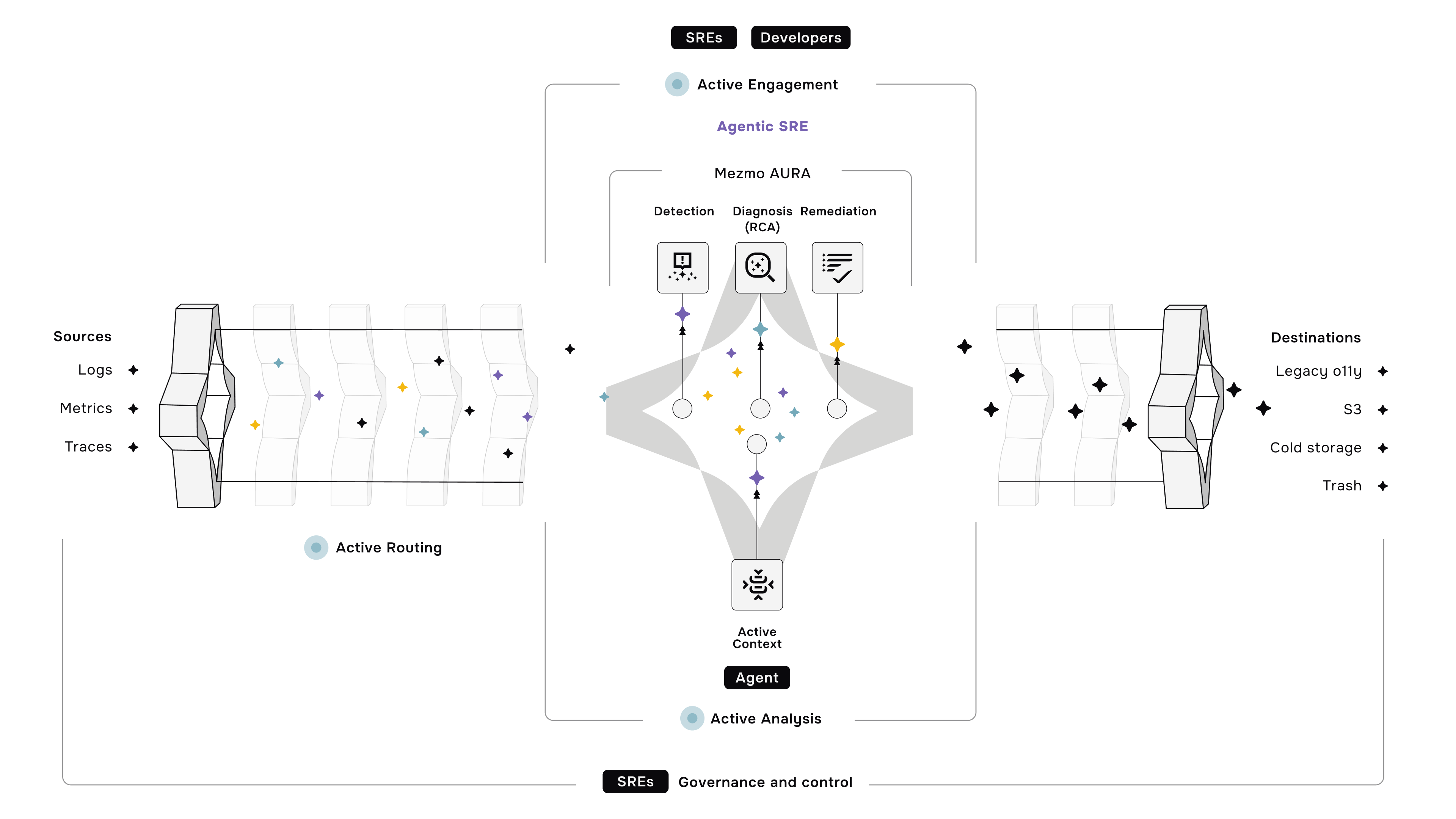

At Mezmo, we consider Understand, Optimize, and Respond to be the three tenets that help control telemetry data and maximize the value derived from it.

- Understanding telemetry data means knowing your data’s origin, content, and patterns so you can discern signals from noise.

- Optimizing telemetry data reduces costs and increases data value by selectively filtering, routing, transforming, and enriching data.

- Responding means ensuring that teams always have the correct data when an incident happens or when the data changes. In that case, either the pipeline can be reconfigured automatically or users can be alerted to the changes.

We have previously discussed data Understanding and Optimization in depth. This blog discusses the need for responsive pipelines and what it takes to design them.

The business environment is never static. Business requirements change often, driving changes in goals, processes, and the technologies required to achieve those goals. These changes affect both the data generated and the data required for decision-making. Your observability, security, and AI/ML tools rely on trusted data to deliver reliable insights. A lack of trust in data undermines confidence in the downstream systems and in the decisions made using that data.

Telemetry pipelines help collect, profile, transform, and route telemetry data for better control and cost-effective observability and security, and they fuel AI/ML initiatives. Any change in data sources, or data drift within those sources, may cause unintended consequences. Take, for example, a developer who ships a code update that generates unanticipated log volumes and suddenly spikes a Splunk or Datadog bill into millions of dollars. How can that be prevented?

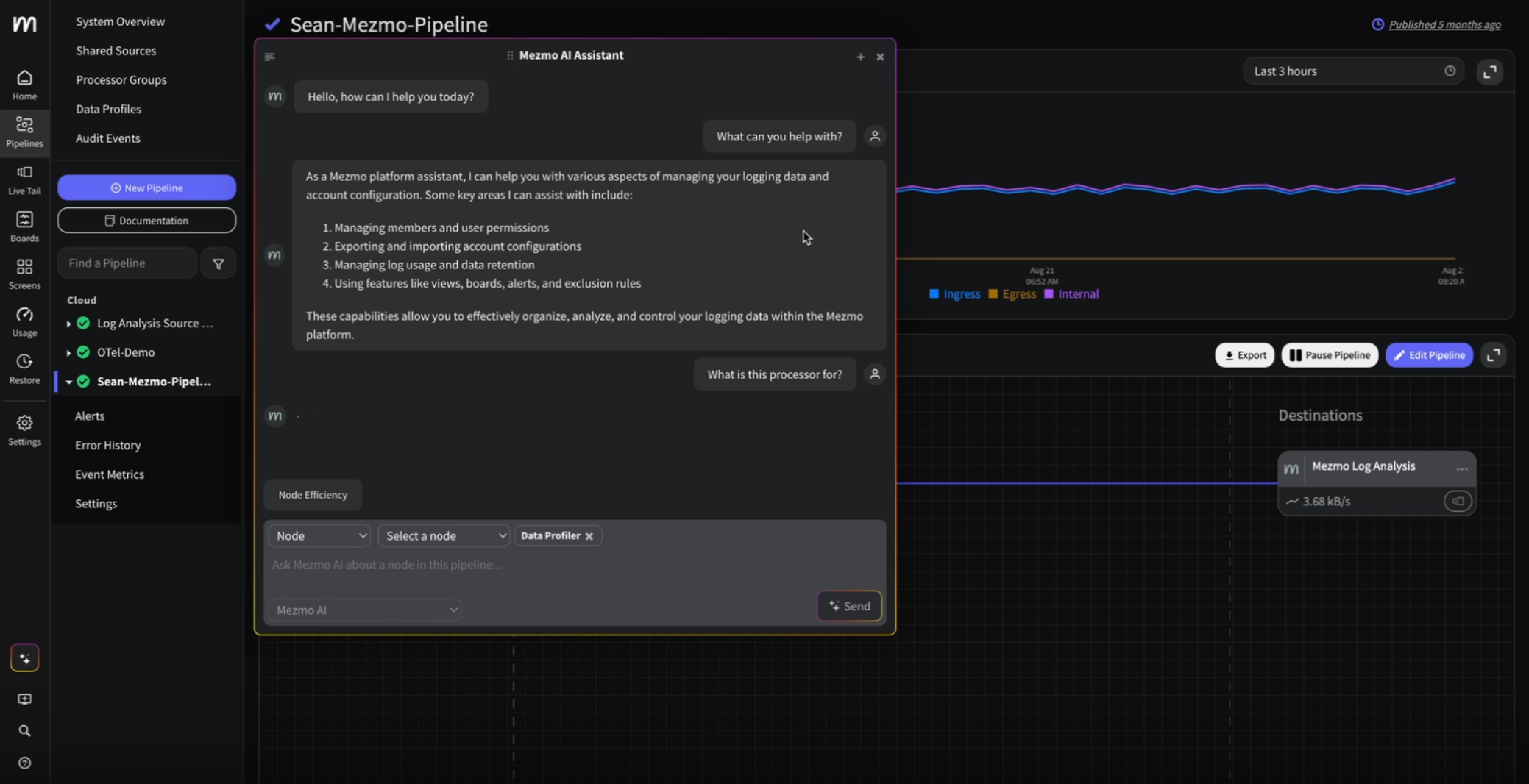

To address such changes, pipelines must deliver more than data transformation and movement. They must be smart enough to detect such aberrations and alert teams when they occur. The Mezmo pipeline is stateful and supports in-stream alerts, that is, alerts on data in motion. Mezmo supports alerts such as:

- Threshold Alerts: Mezmo Telemetry Pipeline will analyze data and alert on any metric derived from unstructured logs, or on a rollup of a metric, based on user-defined thresholds. For example, Mezmo will alert when data volume reaches a specific threshold or when an application has exceeded a predefined number of errors.

- Change Alerts: Mezmo Telemetry Pipeline will compare the absolute or relative percentage change in a metric's value between the current and prior intervals and alert when that change is above or below the user-defined threshold. This helps customers detect sudden surges in data volume and lets them automatically throttle data from certain sources to prevent overages.

- Absence Alerts: The Mezmo Telemetry Pipeline can also be configured to alert when something expected doesn’t happen, such as when an expected file is missing.
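The three alert types above can be sketched as simple checks over per-interval metric values. This is a minimal illustration only, not Mezmo's implementation or API: the function names, parameters, and the choice to treat any movement away from zero as a change are all assumptions made for the example.

```python
def threshold_alert(current: float, limit: float) -> bool:
    """Fire when the metric reaches or exceeds a user-defined threshold."""
    return current >= limit


def change_alert(current: float, previous: float, max_pct_change: float) -> bool:
    """Fire when the relative change between two intervals exceeds max_pct_change.

    max_pct_change is expressed in percent (50.0 means 50 percent).
    """
    if previous == 0:
        # Assumption: any movement away from zero counts as a change.
        return current != 0
    pct_change = abs(current - previous) / abs(previous) * 100.0
    return pct_change > max_pct_change


def absence_alert(events_seen: int) -> bool:
    """Fire when nothing arrived during an interval in which data was expected."""
    return events_seen == 0


# Hypothetical log volumes (events per interval) from one source.
volumes = [1000, 1050, 4200]

steady = change_alert(volumes[1], volumes[0], max_pct_change=50.0)
surge = change_alert(volumes[2], volumes[1], max_pct_change=50.0)
over_limit = threshold_alert(volumes[2], limit=4000)
missing = absence_alert(events_seen=0)
```

Because a change alert needs the prior interval's value, the component evaluating it has to keep state between intervals, which is why a stateful pipeline matters here.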

Alerting on data-in-motion is a key component of being responsive and taking timely action.

Take another situation. You may use a telemetry pipeline to reduce your log volumes before sending them to Splunk to manage your spending. You have determined that 40 percent of the data is good enough in a steady state. But suddenly, one day, your SIEM system detects an incident, and you need all the data to resolve that issue. In such a situation, the pipeline must reroute the data and send full-fidelity information to the SIEM until the issue is resolved.

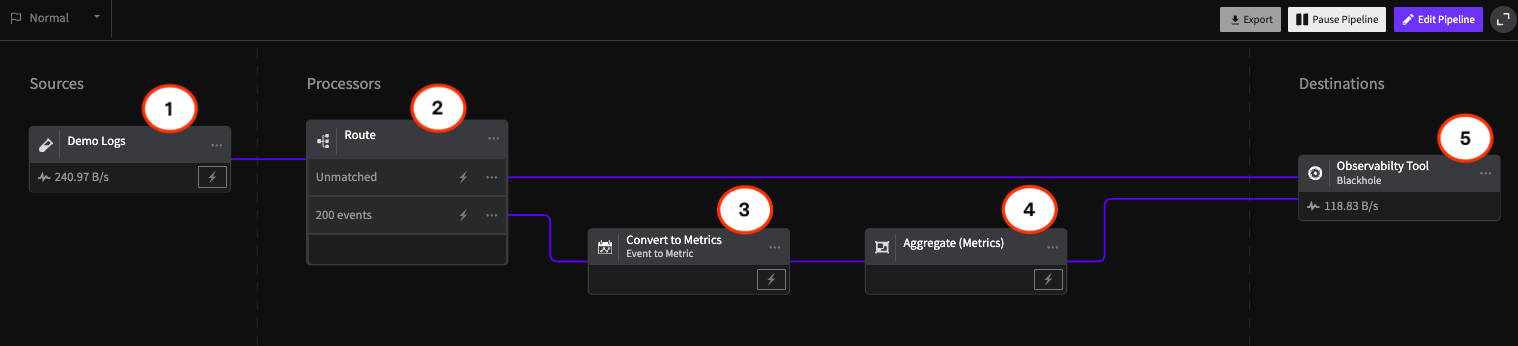

In the accompanying screens, you can see that in “Normal Mode,” the pipeline significantly reduces telemetry volume to save costs. When an incident occurs, the pipeline can be automatically triggered into an “Incident Mode” that reconfigures it to immediately route detailed telemetry data to your SIEM, enabling fast problem diagnosis and resolution.

In incident mode, processors that usually filter or summarize data can be paused. This pipeline configuration change can be initiated through an API call or via a manual configuration action if needed. Using the pipeline configuration options, pipeline elements can be preconfigured to respond in any way needed, including re-routing telemetry to different destinations to help your team quickly get service reliability back on track.
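As a rough sketch of the mode switch described above, the following toy pipeline reduces volume in normal mode and bypasses the reduction step in incident mode. Everything here is hypothetical: the `Pipeline` class, the `set_mode` method, and the use of random sampling as the reduction strategy are assumptions for illustration and do not represent Mezmo's API or processors.

```python
import random
from enum import Enum
from typing import Iterable, List, Optional


class Mode(Enum):
    NORMAL = "normal"
    INCIDENT = "incident"


class Pipeline:
    """Toy pipeline whose volume-reduction step can be paused."""

    def __init__(self, keep_ratio: float = 0.4, rng: Optional[random.Random] = None):
        self.mode = Mode.NORMAL
        self.keep_ratio = keep_ratio  # share of events kept in normal mode
        self._rng = rng or random.Random()

    def set_mode(self, mode: Mode) -> None:
        # In a real deployment this would be triggered by an API call
        # or a manual configuration action.
        self.mode = mode

    def process(self, events: Iterable[str]) -> List[str]:
        if self.mode is Mode.INCIDENT:
            # Reduction is paused: forward full-fidelity data.
            return list(events)
        # Normal mode: keep roughly keep_ratio of the events.
        return [e for e in events if self._rng.random() < self.keep_ratio]


events = [f"log line {i}" for i in range(1000)]
pipeline = Pipeline(keep_ratio=0.4, rng=random.Random(7))

reduced = pipeline.process(events)   # roughly 40 percent of the events
pipeline.set_mode(Mode.INCIDENT)
full = pipeline.process(events)      # every event
```

Keeping the mode as explicit state means that returning to normal operation after the incident is a single `set_mode(Mode.NORMAL)` call rather than a redeployment.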

Finally, consider that you added a new data source, and unbeknownst to you, PII data such as email IDs and credit card numbers embedded in logs are being sent to your analytics systems, putting you at risk of non-compliance. A telemetry pipeline should be able to detect such sensitive data and either alert you or, even better, encrypt or redact that information automatically before it reaches any system.
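To make the redaction idea concrete, here is a minimal sketch that masks email addresses and likely credit card numbers in a log line. The regular expressions, the placeholder strings, and the use of a Luhn checksum to cut down on false positives are assumptions chosen for this example. Production-grade PII detection needs much broader coverage than two patterns.

```python
import re

# Simplified patterns for illustration; real-world PII detection is broader.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 16 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def luhn_ok(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def redact(line: str) -> str:
    """Mask emails and Luhn-valid card numbers before the line leaves the pipeline."""
    line = EMAIL_RE.sub("[REDACTED_EMAIL]", line)

    def mask_card(match: re.Match) -> str:
        digits = re.sub(r"[ -]", "", match.group())
        # Leave digit runs that fail the checksum untouched (likely not cards).
        return "[REDACTED_CARD]" if luhn_ok(digits) else match.group()

    return CARD_RE.sub(mask_card, line)


# 4111 1111 1111 1111 is a well-known test card number, not a real account.
sample = "user=jane@example.com card=4111 1111 1111 1111 status=declined"
clean = redact(sample)
```

Running the redaction step inside the pipeline, before routing, is what keeps the sensitive values from reaching any downstream system at all.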

These are just a few examples of how responsive pipelines can help your business be agile, prevent bad incidents, and reduce incident resolution times. Modern telemetry pipelines must go beyond data collection, routing, and control to shift the logic into the pipeline, help detect issues, and enable timely actions.
