The Grok-to-AI Evolution: Why Modern SREs Are Moving Beyond Manual Parsing

Grok structures logs. Context engineering connects systems. AI explains behavior.

For years, Grok patterns have been the workhorse of the SRE world. Built on regular expressions, Grok helps teams extract structure from unstructured logs. As we explored in "Do You Grok It?", Grok is the key to turning messy log lines into usable fields. It's why our Grok Pattern Reference remains one of our most-visited resources — SREs are hungry for structure.
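
To make the mechanics concrete, here is a minimal Python sketch of what a Grok pattern boils down to: named regular-expression fragments composed into one expression. The `%{SYNTAX:field}` notation is standard Grok. The small pattern table, the `grok_to_regex` helper, and the sample log line are simplified assumptions for illustration, not the full Grok pattern set or any vendor's implementation.

```python
import re

# Tiny stand-in for a Grok pattern library (real libraries define far more,
# and their definitions are stricter than these simplified ones).
PATTERNS = {
    "IP": r"\d{1,3}(?:\.\d{1,3}){3}",
    "WORD": r"\w+",
    "NUMBER": r"\d+(?:\.\d+)?",
    "URIPATH": r"/\S*",
}

def grok_to_regex(grok: str) -> re.Pattern:
    """Expand %{SYNTAX:field} tokens into named regex groups."""
    def expand(match: re.Match) -> str:
        syntax, field = match.group(1), match.group(2)
        return f"(?P<{field}>{PATTERNS[syntax]})"
    return re.compile(re.sub(r"%\{(\w+):(\w+)\}", expand, grok))

pattern = grok_to_regex(r"%{IP:client} %{WORD:method} %{URIPATH:path} %{NUMBER:status}")
line = "10.0.0.7 GET /checkout 500"  # hypothetical log line
fields = pattern.match(line).groupdict()
print(fields)
```

Every extracted value comes back as a string keyed by the field name, which is exactly the "structure from unstructured logs" that makes Grok useful.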

But in modern, ephemeral systems, a hard truth has emerged:

Grok effort scales linearly with the number of log formats you maintain. System complexity scales with the interactions between services, which grow far faster.

If you're still spending on-call shifts fixing brittle parsing rules because a developer changed a log format by one character, you're not doing SRE work — you're maintaining a parsing layer.

The Problem: Grok Is a Mirror, Not a Map

Grok patterns are reactive. They depend on you knowing exactly what log data will look like before something breaks. That creates a growing visibility tax:

The Maintenance Tax: Every service change risks breaking Grok patterns, and the regex they rely on, across your telemetry pipeline.

The "Unknown Unknown" Blind Spot: Grok only extracts what you define. If a new failure mode appears with a different log signature, your pipeline doesn't adapt, and your alerts stay silent.

The Talent Drain: You didn't become an SRE to spend your week updating parsing logic.
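
The first two taxes are easy to reproduce. In the Python sketch below, a plain regex stands in for a Grok pattern; the log format and field names are hypothetical. One added character in the log format, a colon after the level, and the pattern stops matching without raising any error.

```python
import re
from typing import Optional

# Stand-in for a Grok pattern written against the original log format.
PATTERN = re.compile(
    r"^(?P<level>[A-Z]+) (?P<service>[\w-]+) status=(?P<status>\d{3})$"
)

def parse(line: str) -> Optional[dict]:
    """Return extracted fields, or None when the line does not fit the pattern."""
    m = PATTERN.match(line)
    return m.groupdict() if m else None

before = parse("ERROR checkout status=500")
after = parse("ERROR: checkout status=500")  # format drifted by one character

print(before)  # fields extracted as expected
print(after)   # no match: nothing downstream sees status=500
```

Nothing fails loudly here. The second line simply yields no fields, so any alert keyed on `status` never fires. One possible mitigation, offered as a suggestion rather than a prescription, is to track the rate of unparsed lines so the gap at least becomes visible.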

Grok gives you structured fields. But fields alone don't explain system behavior.

The Missing Layer: Context Engineering

The solution isn't to write better Grok patterns. It's to stop asking Grok to do a job it was never designed for.

Before AI can meaningfully help with Root Cause Analysis, your telemetry needs more than parsing — it needs context.

Context engineering is the step beyond Grok. It transforms raw telemetry into high-signal, behavior-ready data by:

- Normalizing structure across logs, metrics, and traces — not just extracting fields, but ensuring they mean the same thing across your entire stack

- Preserving relationships between events, services, and deployments — so you can trace a cascade, not just see isolated errors

- Enriching data with operational and environmental metadata — turning error_code=500 into "500 from the checkout service, during deployment v2.4.1, in us-east-1, 30 seconds after a config change"

- Reducing noise while keeping investigative detail intact — filtering out the irrelevant without losing the trail when you need to dig deeper
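
As a rough illustration of the enrichment step in the list above, the Python sketch below attaches deployment, region, and recent-change metadata to an already-parsed event. The `ServiceContext` record, the `enrich` function, the field names, and the sample timestamps are all hypothetical. A real pipeline would likely pull this context from a deploy system or service catalog rather than a hard-coded object.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass
class ServiceContext:
    """Hypothetical operational metadata for one service."""
    service: str
    deployment: str
    region: str
    last_config_change: datetime

def enrich(event: dict, ctx: ServiceContext) -> dict:
    """Return a copy of the parsed event with operational context attached."""
    elapsed = (event["timestamp"] - ctx.last_config_change).total_seconds()
    return {
        **event,
        "service": ctx.service,
        "deployment": ctx.deployment,
        "region": ctx.region,
        "seconds_since_config_change": elapsed,
    }

# Sample values chosen only to mirror the example in the text.
ctx = ServiceContext(
    service="checkout",
    deployment="v2.4.1",
    region="us-east-1",
    last_config_change=datetime(2024, 1, 1, 12, 0, 0, tzinfo=timezone.utc),
)
event = {
    "timestamp": datetime(2024, 1, 1, 12, 0, 30, tzinfo=timezone.utc),
    "error_code": 500,
}
enriched = enrich(event, ctx)
print(enriched)
```

The original fields are preserved and the context is added alongside them, so the investigative detail stays intact while the event becomes meaningful on its own.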

Instead of just extracting fields like status=500, context engineering helps answer:

- Which service emitted this?

- What changed right before this started?

- Is this isolated or part of a broader pattern?

Parsing tells you what a line says. Context engineering helps explain what the system is doing.

The Evolution: From Parsing Logs to Understanding Systems With AI SREs

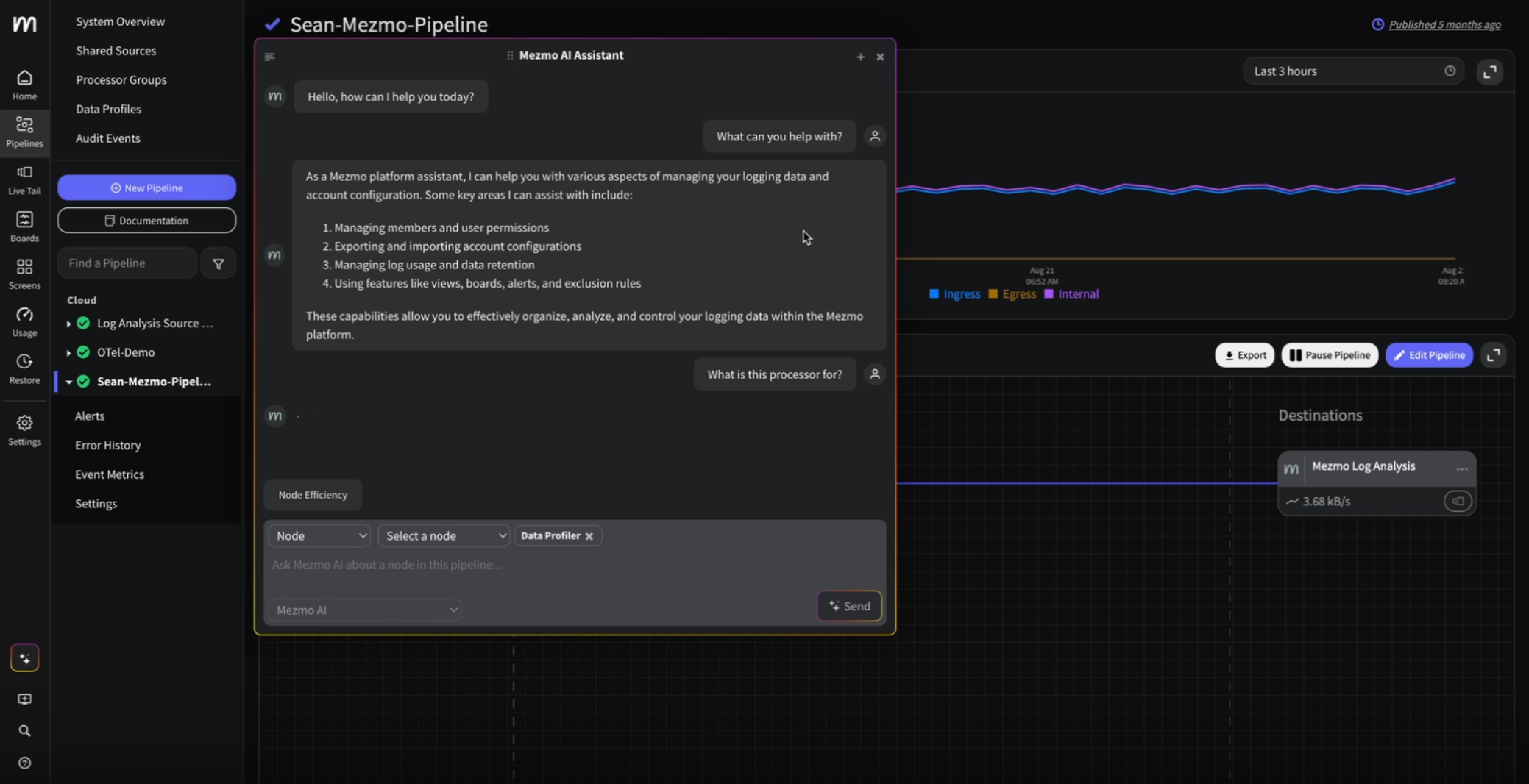

At Mezmo, we've already begun bridging the gap with AI-assisted pattern generation in our Parse Processor, helping teams create Grok patterns and regex more efficiently.

But the real evolution isn't just automating pattern creation. It's shifting from:

Log ingestion → manual parsing → human investigation

to:

Log ingestion → context engineering → AI-assisted RCA

Why This Matters for Root Cause Analysis

AI without context is just a faster way to read logs. AI with context can understand systems.

When telemetry is context-engineered, AI SRE systems can correlate:

- A latency spike

- A deployment 12 minutes earlier

- A configuration drift in one availability zone

- A change in error distribution across services

These relationships rarely live in a single log line or a single Grok pattern. They emerge from connected, enriched telemetry.
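
One simple form of this correlation is a time-window join: given the moment an anomaly starts, list the change events that preceded it. The Python sketch below assumes change events already carry a timestamp, a kind, and a scope; all names and sample values are hypothetical. It is only a heuristic for surfacing candidates, not a description of how any particular AI SRE product reasons.

```python
from datetime import datetime, timedelta, timezone

def recent_changes(anomaly_start, changes, lookback=timedelta(minutes=30)):
    """Return changes within `lookback` before the anomaly, newest first."""
    window_start = anomaly_start - lookback
    hits = [c for c in changes if window_start <= c["at"] <= anomaly_start]
    return sorted(hits, key=lambda c: c["at"], reverse=True)

# Sample data: the latency spike begins at t0.
t0 = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
changes = [
    {"at": t0 - timedelta(minutes=12), "kind": "deployment", "scope": "checkout"},
    {"at": t0 - timedelta(minutes=3), "kind": "config_drift", "scope": "us-east-1a"},
    {"at": t0 - timedelta(hours=6), "kind": "deployment", "scope": "search"},
]

candidates = recent_changes(t0, changes)
for change in candidates:
    minutes = int((t0 - change["at"]).total_seconds() // 60)
    print(f'{change["kind"]} in {change["scope"]}, {minutes} min before the spike')
```

A join like this only works if deployments, config changes, and log events share normalized timestamps and service identifiers, which is the job of the context-engineering layer described earlier.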

You move from:

"What does this log line say?" to "What changed in my system, and why?"

Stop Managing Parsing Rules. Start Engineering Context.

You didn't become an SRE to spend your week updating parsing logic.

Modern observability isn't about building the biggest library of Grok patterns. It's about creating telemetry that's ready for both humans and AI to reason over.

If your team is stuck in a cycle of updating parsing rules every time formats drift, it may be time to move up the stack:

From parsing → to context → to understanding.

Grok extracts fields. Context engineering builds meaning. AI SRE accelerates understanding.

See how Mezmo's AI SRE for Root Cause Analysis bridges the gap from raw logs to automated investigation—with context engineering built in from the ground up.
