How to Leverage Mezmo Archiving

LogDNA is now Mezmo but the product you know and love is here to stay.

Log archiving is the process of storing all kinds of logs (application, system, or monitoring) from across a multitude of systems in a long-term storage solution like S3. Securely collecting and keeping logs is crucial for many businesses, and they have to do it effectively and with minimal supervision.

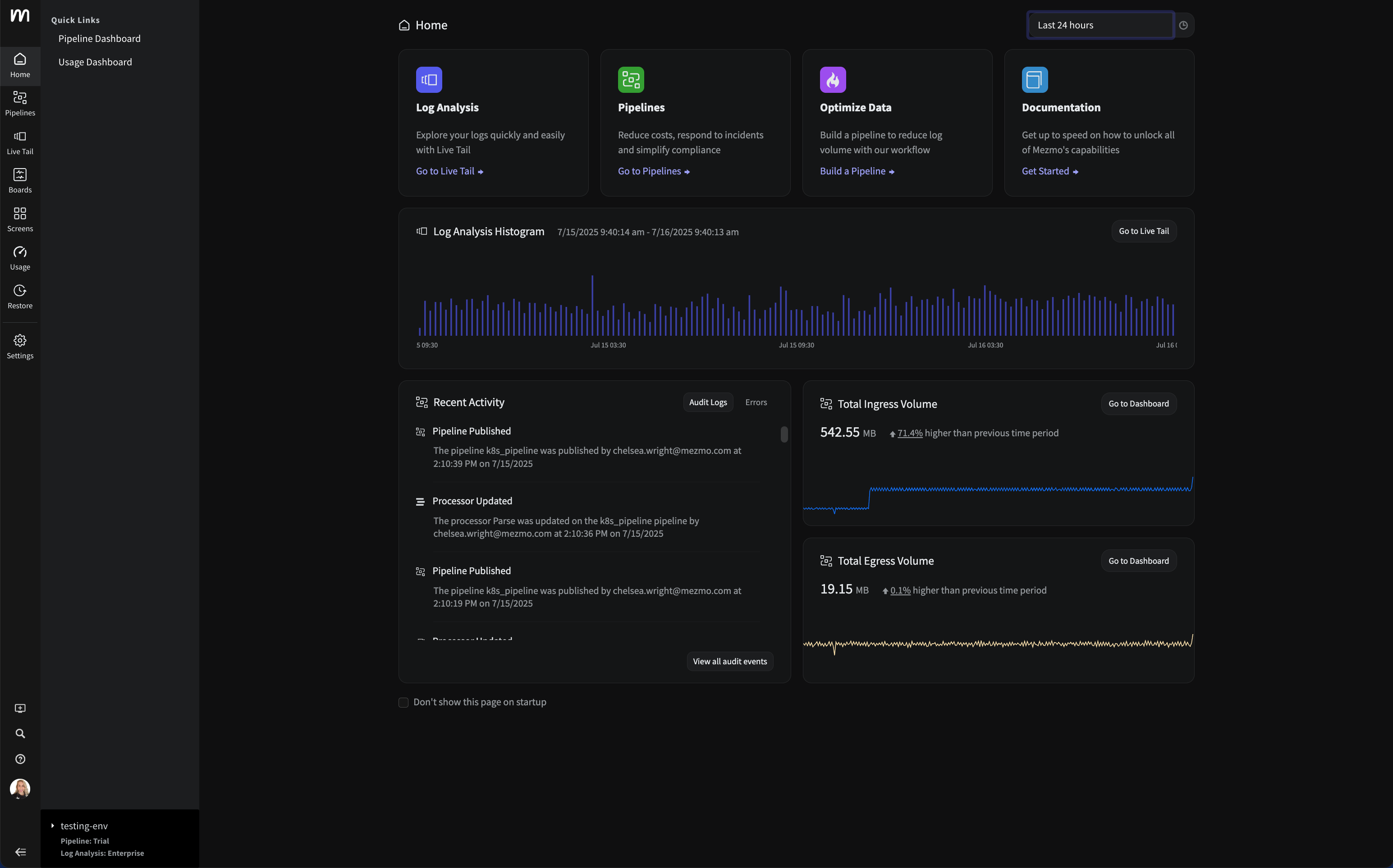

This tutorial will show you how to leverage Mezmo's archiving feature. We’ll walk you through the process of setting up automatic archiving following the official documentation. We’ll also explain why you should archive logs and what other benefits you can gain from doing so. Then, we’ll explore Mezmo's new variable retention feature, which allows users to retain certain logs for various periods and then send them to archiving, thereby maximizing cost efficiency. Finally, we will introduce you to an upcoming feature called rehydration, which will allow you to send previously archived logs back to the platform for further analysis.

Let’s get started.

Why Archive Logs?

You may be asking yourself, “why is archiving logs so important, anyway?” Well, it depends on what kind of business you have and what type of data you are processing.

For some organizations, log retention and archiving is of secondary importance, and is typically done to provide historical context. Others might need logs for security purposes, such as tracking network logs for signs of compromise when an incident occurs.

However, many organizations have to archive logs per regulatory compliance rules and regulations. As an example, HIPAA compliance mandates that logs should be kept for a minimum of six years in a specific format. PCI DSS on the other hand requires a shorter period (1 year minimum). In either case, companies have to adhere to appropriate log retention policies for auditors, demonstrate efficient security and storage requirements, or face hefty fines.

A log archiving tool can help your organization adhere to these requirements by letting you save your log messages to files, disks, or any other storage medium. In an ideal world, you would store only the minimum log data required with minimal supervision, but with the flexibility to go back and search through the logs easily.

How to Archive Logs with Mezmo

Archiving logs is straightforward with Mezmo. All you need is a supported S3 block storage provider with the proper permissions, and Mezmo will handle the rest.

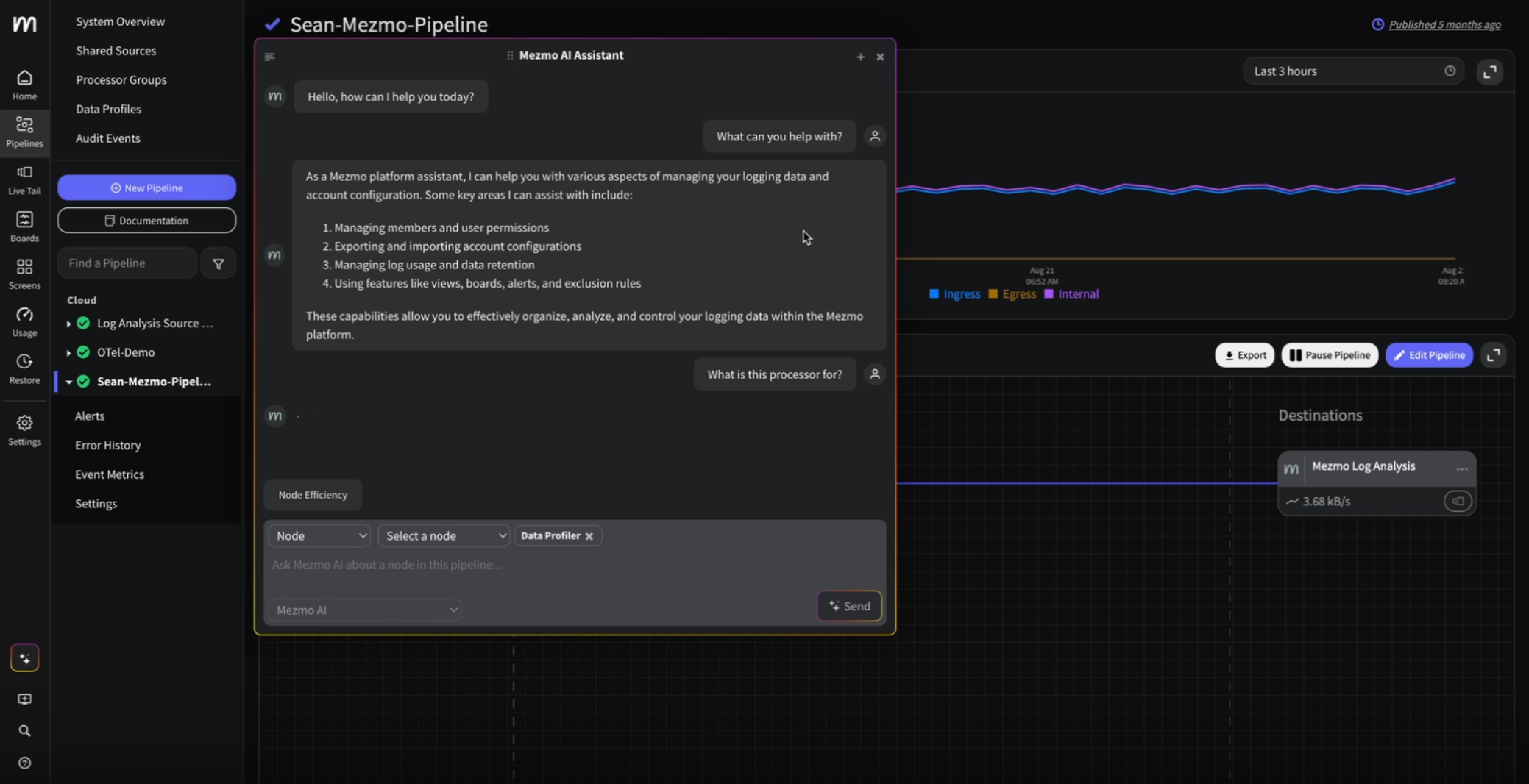

First, you need to enable archiving for your account, as shown in this screenshot:

Once you've enabled archiving, you'll have the option to select a block storage provider. Mezmo supports all major cloud providers, including Amazon, GCloud, and Azure. For simplicity, we will use DigitalOcean block storage for this tutorial.

Next, you'll have to configure settings based on your chosen provider. For DigitalOcean, you'll need the name, endpoint, and access/secret key pairs of the DigitalOcean Spaces. Just the bucket ARN will do for S3 (assuming that you have the correct bucket permissions). For Azure, you will have to fill in the account name and key fields.

Enter the configuration settings for your selected provider. Make sure that the endpoint name is in the correct format. For example:

fra1.digitaloceanspaces.com

Otherwise, the settings won’t save.

That's it! Now, Mezmo will segment and send your logs hourly in a compressed format. However, it might take a day to see the logs in the block storage for the first time.

You can access the stored files by navigating to the block storage provider and inspecting the gzipped files:

If you want to prevent certain log lines from being logged and subsequently archived (for example, lines from a particular host or app), you may want to configure exclusion rules using the appropriate option in the Manage Usage tab:

Now that you've configured archiving, you can check out what the new variable retention feature has to offer.

Variable Retention

Once you've set up your archive, you might want to have more control over when your logs are archived in the block storage, or kept in live dashboards, because you cannot have all data live simultaneously without inevitable logistical tradeoffs. Logging can be expensive, and keeping massive amounts of data in memory causes all sorts of problems with locality, cost-efficiency, and insights.

Variable retention comes into play here, enabling teams to control which logs they want to keep in the live dashboard, and which logs they want to archive sooner. Enterprise Mezmo customers can sign up for the private beta program, which gives them access to four tiers of retention times:3, 7, 14, or 30 days.

As a reminder, Mezmo customers have a fixed retention time depending on their current plan. With variable retention, you gain the option to configure further the log retention time based on rules like hosts, apps, levels, or custom queries, as shown in this screenshot:

Mezmo is also getting ready to introduce another new feature called rehydration. We'll explain what that is and how it helps in the next section.

Rehydration

Once you've had data archived in your block storage for a while, you might want to analyze it using the Mezmo dashboard, which offers superb visualization capabilities. Or maybe you've experienced an incident that involves tracing network logs spanning multiple periods, and want to create reports.

Rehydration will let you send your archived logs back into LogDNA for delayed root cause analysis or auditing. That would be enormously useful if your organization was being audited and needed to produce logs on the fly. The IT team would then be capable of configuring rehydration rules to showcase and print historical logged events within the Mezmo dashboard.

You can sign up for Mezmo's beta program for log data restoration here.

Next Steps with Observability and Logging

You should bookmark Mezmo's blog resources to keep up-to-date with relevant industry news concerning observability and logging best practices. Adopting Mezmo as your leading logging service is a great way to help your DevOps teams by providing a trusted tool to handle all of their logging needs.

.png)

.jpg)

.png)

.png)